Vision for Ackermann vehicles

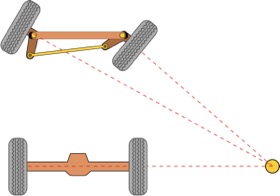

A particularity of many ground vehicle platforms is that they employ the Ackermann steering mechanism. With this concept, they can get by with a single drive motor and only one additional degree of freedom for steering. In its most common form, the steering mechanism simply acts on the front axle of a four-wheel vehicle. As illustrated in the accompanying figure (source: Wikipedia), the mechanism is designed such that the wheels experience minimal slippage even when the vehicle enters a turn. This is achieved by coordinating the steering angles of the individual front wheels such that each wheel moves tangentially to a circle around a common centre, called the Instantaneous Centre of Rotation (ICR). The ICR is furthermore located on the axis of the non-steering back-wheel axle, and in consequence the back wheels also move along circles centred on the ICR.
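
The geometric condition described above can be sketched in a few lines. This is only an illustration under simplifying assumptions: the function name and parameters are hypothetical, the wheel pivots are assumed to sit exactly at the ends of the front axle, and the turning radius is measured from the ICR to the centre of the back-wheel axle.

```python
import math

def ackermann_steering_angles(wheelbase, track, turn_radius):
    """Return (inner, outer) front-wheel steering angles in radians.

    wheelbase:   distance between front and back axle
    track:       distance between the left and right wheel
    turn_radius: distance from the ICR to the centre of the back axle
    """
    if turn_radius <= track / 2:
        raise ValueError("the ICR must lie outside the vehicle footprint")
    # Each front wheel is perpendicular to the line joining it to the ICR.
    inner = math.atan(wheelbase / (turn_radius - track / 2))
    outer = math.atan(wheelbase / (turn_radius + track / 2))
    return inner, outer

inner, outer = ackermann_steering_angles(wheelbase=2.7, track=1.6, turn_radius=10.0)
# Ackermann condition: cot(outer) - cot(inner) equals track / wheelbase.
ackermann_gap = 1 / math.tan(outer) - 1 / math.tan(inner)
```

The inner wheel always receives the larger steering angle, and the difference of the cotangents depends only on the vehicle dimensions, not on the turning radius.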

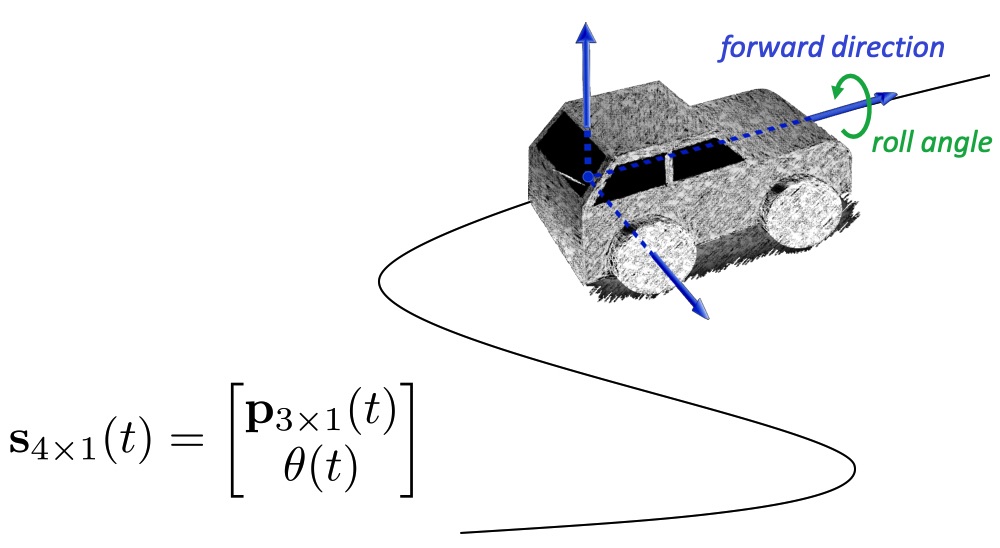

An important consequence of the Ackermann steering model is that the motion of the platform becomes non-holonomic. In simple terms, non-holonomic means that the vehicle has fewer controllable degrees of freedom than the space in which it is moving. A car, for example, cannot simply move sideways, but always needs to move, at least instantaneously, along a circular arc whose centre is located on the back-wheel axis. Our idea is to exploit this special motion model in order to constrain the estimated motion of the vehicle. By employing lower-dimensional models that respect the Ackermann steering mechanism, we can often obtain simpler and thus also more robust solutions to vehicle motion estimation problems. We have treated a series of non-holonomic vehicle relative pose problems for various camera setups (monocular forward-facing, monocular downward-facing, downward-facing event cameras, 360-degree multi-perspective cameras (MPCs), and articulated MPCs). We have furthermore developed large-scale structure-from-motion parametrizations for non-holonomic vehicles, and exploited the motion constraints for motion-dependent, automatic self-calibration of a 360-degree MPC.
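
To make the lower-dimensional model concrete, the following sketch builds a planar relative pose from only two parameters, the heading change and the chord length of the arc. The function name and the frame convention (x forward, y to the left, origin at the centre of the back-wheel axle) are assumptions made for this illustration.

```python
import numpy as np

def arc_relative_pose(theta, rho):
    """Planar pose (R, t) of frame 2 expressed in frame 1 for circular-arc motion.

    theta: heading change in radians
    rho:   chord length, i.e. straight-line distance between the two positions
    """
    c, s = np.cos(theta), np.sin(theta)
    R = np.array([[c, -s], [s, c]])
    # On a circular arc, the chord makes an angle of theta / 2 with the initial heading.
    t = rho * np.array([np.cos(theta / 2), np.sin(theta / 2)])
    return R, t

theta, rho = 0.4, 1.0
R, t = arc_relative_pose(theta, rho)

# The ICR sits on the back-wheel axis (the y axis) of both frames, at the arc radius.
radius = rho / (2 * np.sin(theta / 2))
icr_frame1 = np.array([0.0, radius])
icr_frame2 = R.T @ (icr_frame1 - t)
```

Expressed in either vehicle frame, the ICR has the same coordinates, which is exactly the constraint that removes one of the three degrees of freedom of general planar motion.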

Relative pose estimation for Ackermann vehicles

MPL has worked on a large number of relative pose algorithms for non-holonomic Ackermann vehicles. The following lists the individual contributions along with the particular camera system and configuration that each one targets:

Single forward-facing camera:

K. Huang, Y. Wang, and L. Kneip. Motion estimation of non-holonomic ground vehicles from a single feature correspondence measured over n views. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, USA, June 2019 [pdf] [youtube] [bilibili]

In this work, we demonstrate the use of a constant-velocity motion model to parametrize the poses of a dense sequence of multiple frames. Under the Ackermann motion model, these frames are all captured along a circular arc. Given that the single-camera case is scale invariant, the method optimizes only the rotational velocity from the feature tracks measured across the image sequence. In simple terms, the method extends 1-point RANSAC for non-holonomic vehicles to the multiple-view case.
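
The two-view, 1-point idea that this work builds on can be sketched as follows. This is not the n-view method of the paper; it is a minimal illustration that assumes the bearing vectors are already expressed in a vehicle-aligned frame (x forward, y left, z up) located at the centre of the back-wheel axle. All function names are hypothetical.

```python
import numpy as np

def rot_z(theta):
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def theta_from_one_correspondence(f1, f2):
    """Heading change from a single pair of bearing vectors under circular-arc motion.

    With t ~ (cos(theta/2), sin(theta/2), 0) and R = rot_z(theta), the epipolar
    constraint f1^T [t]_x R f2 = 0 reduces to
        sin(theta/2) * (f1x*f2z + f1z*f2x) = cos(theta/2) * (f1y*f2z - f1z*f2y).
    """
    num = f1[1] * f2[2] - f1[2] * f2[1]
    den = f1[0] * f2[2] + f1[2] * f2[0]
    if den < 0:  # keep theta / 2 in (-pi/2, pi/2]
        num, den = -num, -den
    return 2.0 * np.arctan2(num, den)

# Synthetic check: one 3D point observed before and after an arc motion.
theta_true, rho = 0.2, 1.5
t = rho * np.array([np.cos(theta_true / 2), np.sin(theta_true / 2), 0.0])
p1 = np.array([6.0, 1.5, 1.2])           # point in frame 1
p2 = rot_z(theta_true).T @ (p1 - t)      # same point in frame 2
f1, f2 = p1 / np.linalg.norm(p1), p2 / np.linalg.norm(p2)
theta_est = theta_from_one_correspondence(f1, f2)
```

Because each correspondence yields its own estimate of the heading change, a robust scheme only needs to sample one correspondence per hypothesis. Note that the chord length `rho` does not enter the estimate, which reflects the scale invariance of the single-camera case.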

Single downward-facing camera:

L. Gao, J. Su, J. Cui, X. Zeng, X. Peng, and L. Kneip. Efficient globally-optimal correspondence-less visual odometry for planar ground vehicles. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, May 2020 [pdf] [youtube] [bilibili]

In this work, we analyse the feasibility of estimating Ackermann motion with a downward-facing camera that undergoes fronto-parallel motion with respect to the ground plane. The concept is similar to the functioning of a mouse sensor. It turns the motion estimation into a simple image registration problem in which we only have to identify a 2-parameter planar homography. However, one difficulty that arises from this setup is that ground-plane features are indistinctive and thus hard to match between successive views. We address this difficulty by introducing the first globally-optimal, correspondence-less solution to plane-based Ackermann motion estimation, which exploits the branch-and-bound optimisation technique. Through the low-dimensional parametrisation, a derivation of tight bounds, and an efficient implementation, we demonstrate that this technique is amenable to accurate real-time motion estimation.
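
The following sketch illustrates what a correspondence-less objective over a 2-parameter motion can look like. It deliberately replaces branch-and-bound with an exhaustive grid search, so it is not the globally-optimal method of the paper. It also assumes that the ground points are already expressed in metric vehicle-frame coordinates; the function names, threshold, and search ranges are hypothetical.

```python
import numpy as np

def warp_to_frame2(points1, theta, rho):
    """Map ground points (N x 2) from frame 1 into frame 2 under arc motion."""
    c, s = np.cos(theta), np.sin(theta)
    R = np.array([[c, -s], [s, c]])
    t = rho * np.array([np.cos(theta / 2), np.sin(theta / 2)])
    # p2 = R^T (p1 - t), written for row-vector points.
    return (points1 - t) @ R

def alignment_score(points1, points2, theta, rho, eps=1e-3):
    """Number of warped frame-1 points that have a frame-2 point within eps."""
    warped = warp_to_frame2(points1, theta, rho)
    dists = np.linalg.norm(warped[:, None, :] - points2[None, :, :], axis=2)
    return int(np.sum(dists.min(axis=1) < eps))

rng = np.random.default_rng(0)
points1 = rng.uniform(-1.0, 1.0, size=(40, 2))
theta_true, rho_true = 0.10, 0.20
# Shuffle the second point set so that no matching is known in advance.
points2 = rng.permutation(warp_to_frame2(points1, theta_true, rho_true))

thetas = np.linspace(-0.3, 0.3, 61)
rhos = np.linspace(0.0, 0.5, 51)
scores = np.array([[alignment_score(points1, points2, th, rh) for rh in rhos]
                   for th in thetas])
i, j = np.unravel_index(np.argmax(scores), scores.shape)
theta_hat, rho_hat = thetas[i], rhos[j]
```

The score counts aligned points without ever fixing which point matches which. A grid search only finds the optimum up to the grid resolution, whereas branch-and-bound with valid bounds can certify global optimality over the continuous parameter space.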

Single downward-facing event camera:

X. Peng, Y. Wang, L. Gao, and L. Kneip. Globally-optimal event camera motion estimation. In Proceedings of the European Conference on Computer Vision (ECCV), Glasgow, UK, August 2020 [pdf] [youtube] [bilibili]

X. Peng, L. Gao, Y. Wang, and L. Kneip. Globally-Optimal Contrast Maximisation for Event Cameras. IEEE Transactions on Pattern Analysis and Machine Intelligence (PAMI), 2021.

The setup of this work is similar to the previous one, except that the employed camera is now an event camera (i.e. a dynamic vision sensor). The method furthermore does not extract features on the ground plane. Instead, the input is a much denser set of events expressed in the 3D space spanned by the image coordinates and timestamps. The solution is therefore not formulated as a maximization of the cardinality of a set of pair-wise correspondences, but as a spatio-temporal registration problem in which the entire event stream is aligned by a continuous-time formulation of homographic warping and a maximization of the contrast in the resulting image of unwarped events (i.e. maximum contrast expresses maximal alignment). The problem is again solved globally optimally by employing the branch-and-bound optimization technique. For more information on this, please visit our page on event camera research.
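
The contrast objective can be illustrated with a strongly simplified sketch. Two simplifications are assumed here and do not reflect the paper: the homographic warp is replaced by a constant image velocity along x, and branch-and-bound is replaced by a search over a handful of candidates. Names and parameter values are hypothetical.

```python
import numpy as np

def contrast(events, vx, size=64):
    """Variance of the image obtained by warping all events back to t = 0.

    events: array with rows (x, y, t); vx: candidate image velocity in px/s.
    """
    x = events[:, 0] - vx * events[:, 2]
    y = events[:, 1]
    image, _, _ = np.histogram2d(x, y, bins=size, range=[[0, size], [0, size]])
    return image.var()

# Synthetic events: 20 scene points drifting along x, each firing 20 events.
rng = np.random.default_rng(1)
starts = rng.uniform(8.0, 44.0, size=(20, 2))
times = np.linspace(0.0, 1.0, 20)
vx_true = 8.0
events = np.array([(x0 + vx_true * t, y0, t) for x0, y0 in starts for t in times])

candidates = np.linspace(0.0, 16.0, 17)
scores = [contrast(events, v) for v in candidates]
vx_hat = candidates[int(np.argmax(scores))]
```

With the correct motion, all events of a scene point fall into the same pixel and the accumulated image becomes sharp, whereas a wrong motion smears them over several pixels and lowers the variance.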

Single forward-facing event camera:

W. Xu, S. Zhang, L. Cui, X. Peng, and L. Kneip. Event-based visual odometry on non-holonomic ground vehicles. In Proceedings of the International Conference on 3D Vision (3DV), 2024. [pdf] [code] [video]

This work is a straightforward extension of our CVPR'19 work by Huang et al. to the case of an event camera. By projection onto the horizontal plane and utilization of n-linearities, single event trails can again be exploited in order to form motion hypotheses. However, rather than relying on the exact equations of Viete and Weierstrass, the present work simply relies on Taylor approximations of trigonometric functions.

360-degree surround-view MPC:

Y. Wang, K. Huang, X. Peng, H. Li, and L. Kneip. Reliable frame-to-frame motion estimation for vehicle-mounted surround-view camera systems. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, May 2020 [youtube] [bilibili]

Modern vehicles are often equipped with a surround-view multi-camera system. The current interest in autonomous driving invites the investigation of how to use such systems for a reliable estimation of relative vehicle displacement. Many camera pose algorithms either work for a single camera only, make simplifying assumptions (perfect, constant-velocity Ackermann motion, as in the algorithms above), or simply become degenerate under non-holonomic vehicle motion (solvers relying on the generalized essential matrix). In this work, we introduce a general and reliable solution able to handle all kinds of relative displacements in the plane despite the use of an MPC and the possibly non-holonomic characteristics of the ground plane motion. While the work does not directly depend on the Ackermann motion model, it solves for a single rotational degree of freedom and is therefore perfectly applicable to Ackermann vehicles.

Articulated MPC:

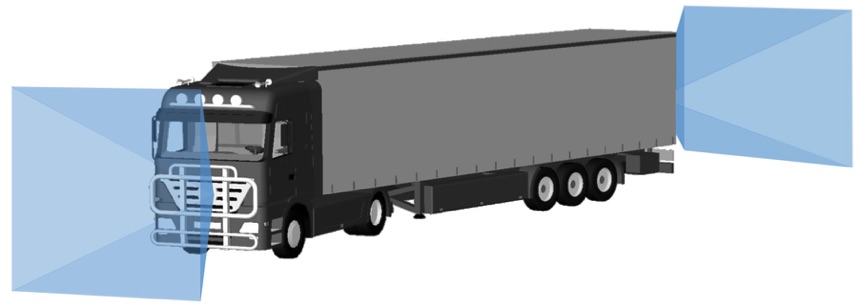

X. Peng, J. Cui, and L. Kneip. Articulated multi-perspective cameras and their application to truck motion estimation. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Macau, China, November 2019 [youtube] [bilibili]

An intricate case of a Multi-Perspective Camera (MPC) occurs when the cameras are distributed over an articulated body. This scenario occurs, for example, in vision-based applications on a truck where additional cameras have been installed on the trailer in order to restore omni-directional perception. We call the result an Articulated Multi-Perspective Camera (AMPC). In this work, we have shown that AMPC motion renders the internal articulation joint state observable. Optimization over all parameters (i.e. the relative displacement and the joint configuration both before and after a displacement) is possible and enhances motion estimation accuracy with respect to using the cameras on each rigid part alone. In particular, the solution again employs the Ackermann steering model, which is valid even for the trailer. As a result, the articulation joint angle both before and after a relative displacement of the entire truck can be recovered from a single feature correspondence in the trailer camera. For more information and a video, please visit the page on 360-degree multi-perspective cameras.

Large-scale structure-from-motion for Ackermann vehicles

The Instantaneous Centre of Rotation (ICR) is called instantaneous because it in fact expresses a relationship between the radius of the arc and the first-order derivatives of the motion, which are the translational and the rotational velocity. The above relative pose solvers all rely on the assumption of temporarily constant velocities, which enables the formulation of relative pose constraints. It must be noted though that this is only an approximation, as velocities are typically not constant, and the surface on which the vehicle moves is typically not a perfectly horizontal plane.
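
The relationship between the arc radius and the two velocities can be checked numerically. The sketch below integrates a simple planar unicycle model, which is an assumption made for this illustration, with constant velocities and verifies that the vehicle stays at a distance of v / omega from a fixed centre.

```python
import math

def integrate_unicycle(v, omega, duration, steps=20_000):
    """Euler-integrate x' = v cos(h), y' = v sin(h), h' = omega, starting at the origin."""
    x = y = heading = 0.0
    dt = duration / steps
    for _ in range(steps):
        x += v * math.cos(heading) * dt
        y += v * math.sin(heading) * dt
        heading += omega * dt
    return x, y, heading

v, omega, duration = 2.0, 0.5, 1.0   # translational and rotational velocity, time span
x, y, heading = integrate_unicycle(v, omega, duration)

radius = v / omega                   # radius implied by the two velocities
# For a left turn, the centre lies at (0, radius) in the initial vehicle frame.
distance_to_icr = math.hypot(x, y - radius)
```

As soon as `v` or `omega` vary over the integration interval, the centre moves and the trajectory is no longer a single arc, which is why the constant-velocity solvers are only approximations.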

Rather than using approximate planar motion models or simple, pair-wise regularization terms, we demonstrate the use of continuous-time B-splines for an exact imposition of smooth, non-holonomic trajectories inside 6 DoF bundle adjustment. The splines force the trajectory of the vehicle to remain smooth and enable us to set the vehicle heading to the first-order derivative of this trajectory (i.e. the tangent of the curve). The work again shows that a significant increase in robustness can be achieved when non-holonomic kinematic constraints on the vehicle motion are taken into account. This is especially true in degraded visual conditions.

K. Huang, Y. Wang, and L. Kneip. B-splines for Purely Vision-based Localization and Mapping on Non-holonomic Ground Vehicles. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2021 [pdf] [youtube] [bilibili]
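
The idea of tying the heading to the tangent of a spline can be sketched in the plane. The paper operates in 6 DoF inside bundle adjustment; the example below is a reduced 2D illustration with a single uniform cubic B-spline segment, and its function names and control points are hypothetical.

```python
import numpy as np

# Basis matrix of a uniform cubic B-spline segment for the monomials (1, u, u^2, u^3).
BASIS = np.array([[1, 4, 1, 0],
                  [-3, 0, 3, 0],
                  [3, -6, 3, 0],
                  [-1, 3, -3, 1]]) / 6.0

def spline_position(ctrl, u):
    """Position on the segment defined by four control points (4 x 2), u in [0, 1]."""
    return np.array([1.0, u, u**2, u**3]) @ BASIS @ ctrl

def spline_heading(ctrl, u):
    """Heading angle, defined as the direction of the first derivative."""
    d = np.array([0.0, 1.0, 2 * u, 3 * u**2]) @ BASIS @ ctrl
    return np.arctan2(d[1], d[0])

ctrl = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.5], [3.0, 1.5]])
u, h = 0.4, 1e-5
heading = spline_heading(ctrl, u)

# Finite-difference check: the heading follows the direction of travel.
step = spline_position(ctrl, u + h) - spline_position(ctrl, u - h)
heading_fd = np.arctan2(step[1], step[0])
```

Because the heading is derived from the position curve, it is no longer a free parameter, so the vehicle cannot slide sideways anywhere along the trajectory.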

Self-calibration of extrinsics for Ackermann vehicles

An important problem in the practical use of 360-degree surround-view cameras is the extrinsic calibration between the cameras, which is challenging due to the often small overlap between the fields of view of neighbouring cameras. The present work is motivated by two insights. First, we argue that the accuracy of vision-based vehicle motion estimation depends crucially on the quality of the exterior orientation calibration, while design parameters for camera positions typically provide sufficient accuracy. Second, we demonstrate how direction vectors related to planar vehicle motion can be used to accurately identify individual camera-to-vehicle rotations, which are more useful than the commonly and tediously derived camera-to-camera transformations. In particular, for Ackermann vehicles, the translation direction in the zero-rotation case coincides with the vehicle forward direction.

Z. Ouyang, L. Hu, Y. Lu, Z. Wang, X. Peng, and L. Kneip. Online calibration of exterior orientations of a vehicle-mounted surround-view camera system. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, May 2020
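
How two motion-related directions can fix a camera-to-vehicle rotation is sketched below. This is an illustration and not the calibration procedure of the paper. It assumes that two directions have been measured in the camera frame: the translation direction during straight driving, and the ground-plane normal (for example the rotation axis during turns). Function names and the frame convention (x forward, y left, z up) are hypothetical.

```python
import numpy as np

def camera_to_vehicle_rotation(forward_cam, up_cam):
    """Rotation R such that v_vehicle = R @ v_camera.

    forward_cam: translation direction during straight driving, in the camera frame
    up_cam:      ground-plane normal, in the camera frame
    """
    up = up_cam / np.linalg.norm(up_cam)
    fwd = forward_cam - np.dot(forward_cam, up) * up   # remove any vertical component
    fwd = fwd / np.linalg.norm(fwd)
    left = np.cross(up, fwd)
    # The rows are the vehicle axes expressed in the camera frame.
    return np.vstack([fwd, left, up])

def rot_x(a):
    c, s = np.cos(a), np.sin(a)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])

def rot_z(a):
    c, s = np.cos(a), np.sin(a)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

# Synthetic check with a known mounting rotation.
R_true = rot_z(0.3) @ rot_x(-1.9)
forward_cam = R_true.T @ np.array([1.0, 0.0, 0.0])   # vehicle x axis seen by the camera
up_cam = R_true.T @ np.array([0.0, 0.0, 1.0])        # vehicle z axis seen by the camera
R_est = camera_to_vehicle_rotation(forward_cam, up_cam)
```

Each camera can be calibrated against the vehicle frame in this way without requiring any overlap with its neighbours.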