Continuous-time formulations

Sensors that sample asynchronously or at a high temporal rate, such as Inertial Measurement Units (IMUs) or Lidars, are difficult to embed into sparse, keyframe-based optimization methods: the latter optimize poses for relatively few time instants only, whereas each of the many individual measurements is sampled at its own time. A commonly employed solution to this problem is manifold pre-integration, which is primarily used with IMUs. MPL has published a series of works that employ velocity parameters for relative pose estimation problems, or B-splines for continuous-time trajectory optimization in the context of camera calibration. We have furthermore employed B-splines for explicit non-holonomic trajectory parametrizations.

Continuous-time formulations for rolling shutter cameras:

Rolling shutter cameras sample row-by-row, and continuous-time parametrizations therefore enable each point measurement to be processed at its exact sampling time depending on the row in which the point is located. We use this parametrization for rolling shutter camera calibration, which includes calibration of the line-delay parameter. For more information, visit the camera calibration page.
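The row-dependent sampling time described above can be illustrated with a minimal sketch. It assumes a constant delay between consecutive rows and a trajectory that can be evaluated at any time. The names `row_timestamp`, `sample_trajectory_at_rows`, and `toy_trajectory`, as well as the numeric values, are hypothetical and chosen only for illustration; this is not the calibration method of the paper.

```python
def row_timestamp(frame_start, row, line_delay):
    """Sampling time of one image row, assuming a constant delay between rows."""
    return frame_start + row * line_delay


def sample_trajectory_at_rows(trajectory, frame_start, rows, line_delay):
    """Evaluate a continuous-time trajectory at the sampling time of each row.

    `trajectory` is any callable mapping a time in seconds to a pose
    (hypothetical interface, used only for this illustration).
    """
    return [trajectory(row_timestamp(frame_start, r, line_delay)) for r in rows]


def toy_trajectory(t):
    """Toy trajectory: position of a camera translating along x at 2 m/s."""
    return (2.0 * t, 0.0, 0.0)


# Three points observed in rows 0, 240 and 479 of the same frame are
# processed at three different times, hence at three different poses.
poses = sample_trajectory_at_rows(toy_trajectory, frame_start=0.0,
                                  rows=[0, 240, 479], line_delay=30e-6)
for pose in poses:
    print(pose)
```

In a calibration setting, `line_delay` would be one of the unknowns, which is why the trajectory must be available at arbitrary times rather than at frame times only.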

L Oth, P T Furgale, L Kneip, and R Siegwart. Rolling shutter camera calibration. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Portland, USA, June 2013 [pdf]

Based on a constant velocity motion model, we have furthermore collaborated on the development of a novel relative pose solver for rolling shutter images. A complete analysis of epipolar geometry generalized to the case of rolling shutter cameras can be found in the following work:

Y Dai, H Li, and L Kneip. Rolling shutter camera relative pose: Generalized epipolar geometry. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, USA, June 2016 [pdf]

Continuous-time formulations for non-holonomic vehicles:

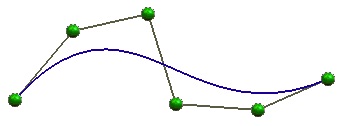

We demonstrate the use of continuous-time B-splines for an exact imposition of smooth, non-holonomic trajectories inside 6 DoF bundle adjustment. The splines force the trajectory of the vehicle to remain smooth and at the same time enable us to set the vehicle heading to the first-order derivative of this trajectory (i.e. the tangent of the curve). For more information on non-holonomic vehicle motion estimation, please visit our page on Ackermann vehicles.
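The idea of taking the heading from the tangent of the spline can be sketched as follows. The example is a simplification under stated assumptions: it uses a single planar, uniform cubic B-spline segment rather than the 6 DoF formulation of the paper, and the function names are hypothetical.

```python
import math


def cubic_bspline_basis(u):
    """Uniform cubic B-spline basis functions for u in [0, 1]."""
    return (
        (1.0 - u) ** 3 / 6.0,
        (3.0 * u ** 3 - 6.0 * u ** 2 + 4.0) / 6.0,
        (-3.0 * u ** 3 + 3.0 * u ** 2 + 3.0 * u + 1.0) / 6.0,
        u ** 3 / 6.0,
    )


def cubic_bspline_basis_derivative(u):
    """First derivatives of the basis functions with respect to u."""
    return (
        -((1.0 - u) ** 2) / 2.0,
        (3.0 * u ** 2 - 4.0 * u) / 2.0,
        (-3.0 * u ** 2 + 2.0 * u + 1.0) / 2.0,
        u ** 2 / 2.0,
    )


def position(control_points, u):
    """Point on the segment defined by four 2D control points."""
    b = cubic_bspline_basis(u)
    x = sum(w * p[0] for w, p in zip(b, control_points))
    y = sum(w * p[1] for w, p in zip(b, control_points))
    return x, y


def heading(control_points, u):
    """Vehicle heading, taken as the direction of the curve tangent."""
    d = cubic_bspline_basis_derivative(u)
    dx = sum(w * p[0] for w, p in zip(d, control_points))
    dy = sum(w * p[1] for w, p in zip(d, control_points))
    return math.atan2(dy, dx)


# Control points of a gentle left turn (arbitrary illustrative values).
ctrl = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.5), (3.0, 1.5)]
for u in (0.0, 0.5, 1.0):
    print(position(ctrl, u), heading(ctrl, u))
```

Because the heading is derived from the control points rather than optimized separately, the non-holonomic constraint holds by construction at every point of the curve.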

K. Huang, Y. Wang, and L. Kneip. B-splines for Purely Vision-based Localization and Mapping on Non-holonomic Ground Vehicles. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2021 [youtube] [bilibili]

In another work, we demonstrate the use of a constant velocity motion model to parametrize the poses of a dense sequence of multiple frames. Under the Ackermann motion model, these frames are notably captured along a circular arc. Given that the single camera case is scale invariant, the method optimizes only the rotational velocity from the feature tracks measured across those image sequences. In simple terms, the method extends 1-point RANSAC for non-holonomic vehicles to the multiple view case.
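The circular-arc parametrization can be sketched as follows, assuming planar motion, a vehicle that starts at the origin heading along the x-axis, and a forward speed fixed to an arbitrary unit value because scale is unobservable with a single camera. The function `arc_pose` and the chosen values are hypothetical and serve only to show how a single rotational velocity determines all poses of the sequence.

```python
import math


def arc_pose(omega, t, speed=1.0):
    """Planar pose (x, y, heading) at time t for constant-velocity arc motion.

    omega: rotational velocity in rad/s (the only free parameter here).
    speed: forward speed, fixed arbitrarily since monocular scale is unknown.
    """
    theta = omega * t
    if abs(omega) < 1e-12:
        # Degenerate case: straight-line motion.
        return (speed * t, 0.0, 0.0)
    radius = speed / omega
    return (radius * math.sin(theta), radius * (1.0 - math.cos(theta)), theta)


# Poses of five frames captured at 10 Hz while turning at 0.5 rad/s.
timestamps = [0.0, 0.1, 0.2, 0.3, 0.4]
for t in timestamps:
    print(arc_pose(0.5, t))
```

The time derivative of the position is `(speed * cos(theta), speed * sin(theta))`, so the velocity always points along the heading, as required for a non-holonomic vehicle.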

K Huang, Y Wang, and L Kneip. Motion estimation of non-holonomic ground vehicles from a single feature correspondence measured over n views. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, USA, June 2019 [pdf] [youtube] [bilibili]

Continuous-time formulations for event cameras:

The works below address several motion estimation problems with event cameras. The flow of the events is modelled by a general, continuous-time, velocity-dependent homographic warping in a space-time volume, and the objective is formulated as a maximisation of contrast within the image of warped events.
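The contrast objective can be illustrated with a strongly simplified sketch. It replaces the homographic warping with a constant 2D image-plane velocity, accumulates warped events by nearest-pixel voting, and measures contrast as the variance of the resulting image. All of these choices, and all function names, are assumptions made for illustration; the sketch shows only the objective, not the globally-optimal search of the papers.

```python
def warp_events(events, velocity):
    """Warp events (x, y, t) back to time 0 along a constant image velocity."""
    vx, vy = velocity
    return [(x - vx * t, y - vy * t) for x, y, t in events]


def image_of_warped_events(points, width, height):
    """Accumulate warped events into an image by nearest-pixel voting."""
    image = [[0] * width for _ in range(height)]
    for x, y in points:
        col, row = int(round(x)), int(round(y))
        if 0 <= col < width and 0 <= row < height:
            image[row][col] += 1
    return image


def contrast(image):
    """Contrast measured as the variance of the pixel values."""
    values = [v for row in image for v in row]
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def objective(events, velocity, width, height):
    """Contrast of the image of warped events for a candidate velocity."""
    warped = warp_events(events, velocity)
    return contrast(image_of_warped_events(warped, width, height))


# Events triggered by one point moving at 1 pixel/s along x (toy data).
events = [(2.0, 3.0, 0.0), (3.0, 3.0, 1.0), (4.0, 3.0, 2.0), (5.0, 3.0, 3.0)]
print(objective(events, (1.0, 0.0), 8, 8))  # correct velocity: events align
print(objective(events, (0.0, 0.0), 8, 8))  # wrong velocity: events spread out
```

With the correct velocity, all events collapse onto one pixel and the image of warped events becomes sharp, which is what the maximisation of contrast rewards.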

X. Peng, Y. Wang, L. Gao, and L. Kneip. Globally-optimal event camera motion estimation. In Proceedings of the European Conference on Computer Vision (ECCV), Glasgow, UK, August 2020 [pdf] [youtube] [bilibili]

X. Peng, L. Gao, Y. Wang, and L. Kneip. Globally-Optimal Contrast Maximisation for Event Cameras. IEEE Transactions on Pattern Analysis and Machine Intelligence (PAMI), 2021.

We present the first dynamic event camera calibration algorithm. It calibrates directly from events captured during relative motion between an event camera and a regular calibration pattern. The method is driven by a novel feature extraction mechanism for calibration patterns, and leverages existing calibration tools before optimizing all parameters through a multi-segment continuous-time formulation. For more information, please visit our page on event camera research. Please also note that this work has been released as open source, and the code link can be found below.

K. Huang, Y. Wang, and L. Kneip. Dynamic Event Camera Calibration. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), 2021b. [pdf] [code] [youtube] [bilibili]