Camera architectures

While the most fundamental case of vision-based perception uses only a single camera, monocular SLAM poses significant challenges: an often limited field of view, the resulting difficulty of distinguishing between rotational and translational motion patterns, and the lack of direct depth measurements. For practical applications, we therefore often choose alternative camera architectures. Besides monocular solutions, MPL has contributed significant research advances with the visual sensors and sensor systems listed below.

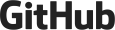

Stereo vision

A highly popular choice in many existing smart mobile systems is the stereo camera. A stereo camera consists of two synchronized cameras that are laterally displaced with respect to each other. Given the extrinsic transformation between the two views, the result of either sparse or dense image matching can be readily converted into depth cues. A stereo vision system therefore does not depend on depth cues induced by camera motion, but can instantaneously provide metric depth information for different points in the scene. As a result, stereo vision frameworks often perform more robustly than monocular alternatives. They furthermore do not suffer from scale unobservability as long as the ratio between the baseline of the two cameras and the average depth of the scene does not become too small. MPL's director has contributed to two stereo frameworks designed for VSLAM (Visual Simultaneous Localization And Mapping), one running on embedded drone-mounted hardware and one on an underwater system. More recently, there have also been contributions to event-based stereo cameras as well as hybrid stereo setups that combine one event camera with one regular RGB or depth camera.
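The relation between image matching and metric depth can be made concrete with a minimal sketch. It assumes an idealized, rectified pinhole stereo pair, for which depth follows from disparity as Z = f b / d. The function names, the focal length, the baseline, and the disparity noise level are illustrative assumptions and are not taken from any of the frameworks listed here. The second function shows, to first order, why accuracy degrades once the baseline becomes small relative to the scene depth.

```python
import numpy as np


def disparity_to_depth(disparity_px, focal_px, baseline_m, min_disparity_px=1e-6):
    """Convert rectified stereo disparities (pixels) to metric depth (meters).

    Uses Z = f * b / d. Disparities at or below min_disparity_px are treated
    as points at infinity.
    """
    d = np.atleast_1d(np.asarray(disparity_px, dtype=float))
    depth = np.full(d.shape, np.inf)
    valid = d > min_disparity_px
    depth[valid] = focal_px * baseline_m / d[valid]
    return depth


def depth_sigma(depth_m, focal_px, baseline_m, disparity_sigma_px=0.5):
    """First-order depth standard deviation caused by disparity noise.

    Differentiating Z = f * b / d gives sigma_Z ~= Z^2 / (f * b) * sigma_d,
    so the error grows quadratically with depth and shrinks with the baseline.
    """
    z = np.asarray(depth_m, dtype=float)
    return z ** 2 / (focal_px * baseline_m) * disparity_sigma_px


if __name__ == "__main__":
    focal_px, baseline_m = 500.0, 0.1  # hypothetical calibration values
    print(disparity_to_depth([50.0, 5.0, 0.0], focal_px, baseline_m))
    print(depth_sigma([1.0, 10.0], focal_px, baseline_m))
```

With these assumed values, a point at ten times the depth carries one hundred times the depth uncertainty, which is the practical meaning of the baseline-to-depth ratio becoming too small.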

R. Voigt, J. Nikolic, C. Hürzeler, S. Weiss, L. Kneip, and R. Siegwart. Robust embedded egomotion estimation. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), San Francisco, USA, September 2011 [pdf]

J. Zhang, V. Ila, and L. Kneip. Robust visual odometry in underwater environment. In OCEANS18 MTS/IEEE Kobe, Kobe, Japan, May 2018 [pdf]

Y. Zhou, G. Gallego, H. Rebecq, L. Kneip, H. Li, and D. Scaramuzza. Semi-dense 3d reconstruction with a stereo event camera. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, September 2018. [pdf]

Y. Zuo, L. Cui, X. Peng, Y. Xu, S. Gao, X. Wang, and L. Kneip. Accurate Depth Estimation from a Hybrid Event-RGB Stereo Setup. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), 2021.

Y. Zuo, J. Yang, J. Chen, X. Wang, Y. Wang, and L. Kneip. DEVO: Depth-Event Camera Visual Odometry in Challenging Conditions. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2022. [pdf] [video]

RGBD cameras

Another highly convenient way of retrieving instantaneous depth estimates, and thus simplifying the Simultaneous Localization And Mapping problem, is to employ a depth camera such as the Microsoft Kinect. MPL has been involved in the development of several RGBD camera tracking solutions which operate highly efficiently by exploiting structural regularities such as piece-wise planar environment geometries or Manhattan world arrangements. More recently, we have published another highly efficient approach that does not make any assumptions about the environment, but speeds up the calculation by extracting and tracking semi-dense features. In our most recent work, we present spatial AI paradigms that aim at modelling scenes at the level of objects. Please visit our page on Visual SLAM to see demos of the developed frameworks.

Y. Zhou, L. Kneip, and H. Li. Real-Time Rotation Estimation for Dense Depth Sensors in Piece-wise Planar Environments. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, October 2016 [pdf] [video]

Y. Zhou, L. Kneip, C. Rodriguez, and H. Li. Divide and conquer: Efficient density-based tracking of 3d sensors in manhattan worlds. In Proceedings of the Asian Conference on Computer Vision (ACCV), Taipei, Taiwan, November 2016. Oral presentation [pdf] [code]

Y. Zhou, L. Kneip, and H. Li. Semi-dense Visual Odometry for RGB-D Cameras using Approximate Nearest Neighbour Fields. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Singapore, May 2017 [pdf]

Y. Zhou, H. Li, and L. Kneip. Canny-VO: Visual Odometry with RGB-D Cameras based on Geometric 3D-2D Edge Alignment. IEEE Transactions on Robotics (T-RO), 35(1):1–16, 2019 [pdf]

L. Hu, Y. Cao, P. Wu, and L. Kneip. Dense object reconstruction from rgbd images with embedded deep shape representations. In Asian Conference on Computer Vision (ACCV), Workshop on RGB-D - sensing and understanding via combined colour and depth, Perth, Australia, December 2018 [pdf]

L. Hu, W. Xu, K. Huang, and L. Kneip. Deep-SLAM++: object-level RGBD SLAM based on class-specific deep shape priors. ArXiv e-prints, 2019 [pdf]

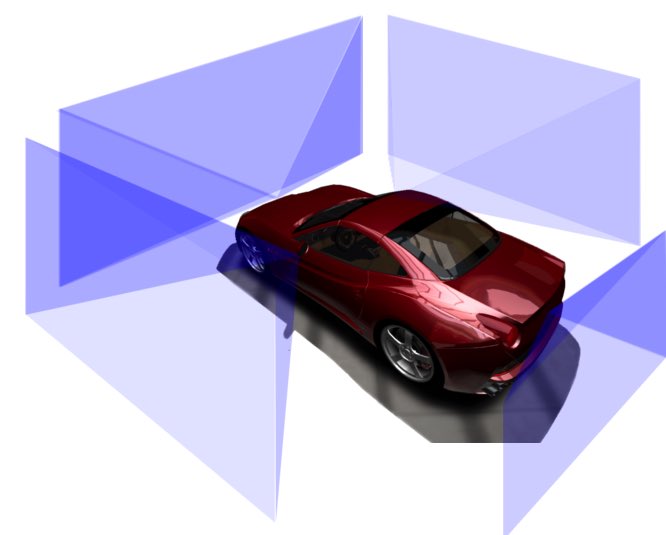

Multi-perspective cameras

Since Prof. Kneip's early involvement in the EU FP7 project V-Charge, MPL has continued to investigate pose estimation and VSLAM based on multi-perspective camera systems (MPCs), particularly those for which the cameras share only very little overlap in their fields of view. Such systems commonly occur on passenger vehicles, which are often equipped with a 360-degree surround-view camera system for parking assistance. Given the close-to-market nature of these sensors, it becomes an economically relevant question whether or not MPCs can be used for VSLAM and the solution of certain vehicle autonomy problems. While the tracking accuracy and robustness of vision-based solutions can hardly compete with Lidar-based solutions, they may already be sufficient for certain less safety-critical applications such as autonomous valet parking. Perhaps somewhat surprisingly, MPCs are able to render metric scale observable despite potentially having no overlap in their fields of view. Surround-view MPCs also share the benefits of any large field-of-view camera, namely a good ability to distinguish rotational and translational motion patterns, and high tracking robustness. MPL is among the global leaders in the handling of MPCs, and has put strong emphasis on the development of fundamental geometric pose calculation algorithms for non-central (including multi-perspective) camera systems. More information on this can be found on our research page on geometric solvers, and most solvers can be found in our open-source project OpenGV. We have furthermore published simple visual odometry, full SLAM, and online calibration solutions for surround-view MPCs. Please visit our page on 360-degree Multi-perspective cameras to see demos of the developed frameworks.
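The scale observability of non-overlapping MPCs can be illustrated with the generalized epipolar constraint for non-central cameras. The sketch below is a toy numerical check under stated assumptions, not the formulation used in the publications listed here. It assumes a rigid body with known camera centers v (expressed in the body frame), bearing vectors f (also in the body frame), and a relative pose x1 = R x2 + t between two body poses. All function names, camera positions, and scene points are hypothetical. With rotation, only the true translation magnitude satisfies the constraint; under pure translation with intra-camera correspondences only, any scale satisfies it, which is the well-known degenerate case.

```python
import numpy as np


def skew(a):
    """Skew-symmetric matrix such that skew(a) @ b == np.cross(a, b)."""
    return np.array([[0.0, -a[2], a[1]],
                     [a[2], 0.0, -a[0]],
                     [-a[1], a[0], 0.0]])


def rotation_about_z(angle_rad):
    c, s = np.cos(angle_rad), np.sin(angle_rad)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def generalized_epipolar_residual(f1, v1, f2, v2, R, t):
    """Residual of the generalized epipolar constraint for x1 = R @ x2 + t.

    f1, f2: unit bearing vectors in body frames 1 and 2.
    v1, v2: centers of the observing cameras in the body frame (metric).
    The last two terms do not depend on t, which is what fixes the scale.
    """
    return (f1 @ skew(t) @ R @ f2
            + f1 @ R @ np.cross(v2, f2)
            + np.cross(v1, f1) @ R @ f2)


def bearing(point_body, cam_center):
    ray = point_body - cam_center
    return ray / np.linalg.norm(ray)


def residual_for_scale(scale, R, t, points_frame1, cam_centers):
    """Sum of absolute residuals when the true t is replaced by scale * t.

    Each point is observed by the same camera in both frames, i.e. the
    cameras share no overlap (intra-camera correspondences only).
    """
    total = 0.0
    for p1, v in zip(points_frame1, cam_centers):
        p2 = R.T @ (p1 - t)  # same point expressed in body frame 2
        f1, f2 = bearing(p1, v), bearing(p2, v)
        total += abs(generalized_epipolar_residual(f1, v, f2, v, R, scale * t))
    return total


# Hypothetical setup: one camera on each side of a vehicle, one point each.
cam_centers = [np.array([1.0, 0.0, 0.0]), np.array([-1.0, 0.0, 0.0])]
points = [np.array([5.0, 1.0, 0.5]), np.array([-4.0, 2.0, -0.3])]
t_true = np.array([0.2, 1.0, 0.0])

if __name__ == "__main__":
    R_turn = rotation_about_z(0.1)
    print(residual_for_scale(1.0, R_turn, t_true, points, cam_centers))
    print(residual_for_scale(2.0, R_turn, t_true, points, cam_centers))
    print(residual_for_scale(2.0, np.eye(3), t_true, points, cam_centers))
```

In this toy setup the residual vanishes at the true scale, becomes non-zero when the translation is doubled during a turn, and vanishes again for any scale when the body only translates.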

T. Kazik, L. Kneip, J. Nikolic, M. Pollefeys, and R. Siegwart. Real-time 6D stereo visual odometry with non-overlapping fields of view. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, USA, June 2012 [pdf] [video]

Y. Wang and L. Kneip. On scale initialization in non-overlapping multi-perspective visual odometry. In Proceedings of the International Conference on Computer Vision Systems, Shenzhen, July 2017. Best Student Paper Award

Y. Wang, K. Huang, X. Peng, H. Li, and L. Kneip. Reliable frame-to-frame motion estimation for vehicle-mounted surround-view camera systems. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, May 2020 [youtube] [bilibili]

Z. Ouyang, L. Hu, Y. Lu, Z. Wang, X. Peng, and L. Kneip. Online calibration of exterior orientations of a vehicle-mounted surround-view camera system. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, May 2020.

Y. Wang, W. Jiang, K. Huang, S. Schwertfeger, and L. Kneip. Accurate calibration of multi-perspective cameras from a generalization of the hand-eye constraint. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2022. [pdf] [youtube] [code]

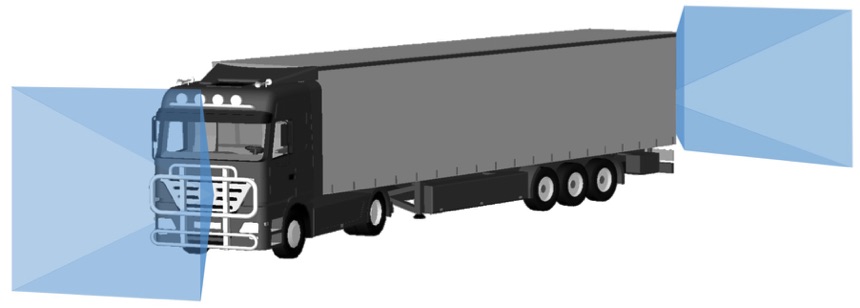

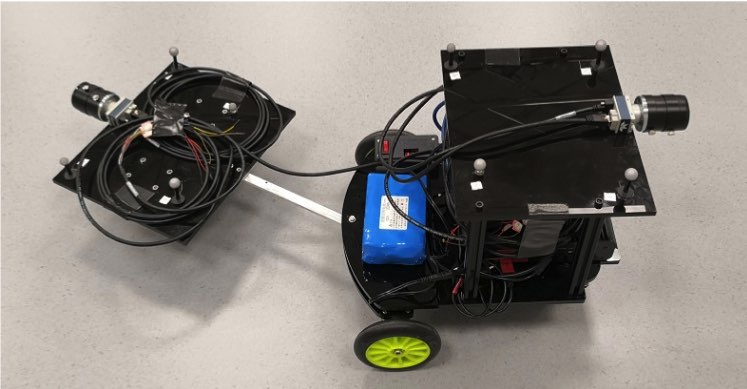

Articulated or non-rigid multi-perspective cameras

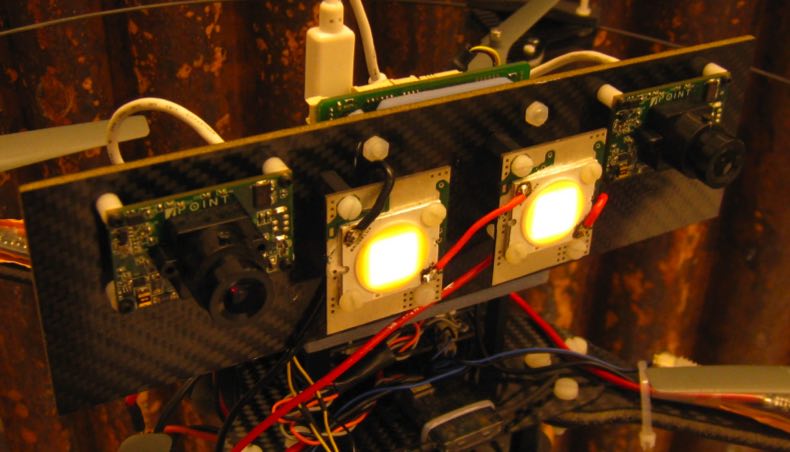

An intricate case of a Multi-Perspective Camera (MPC) occurs when the cameras are distributed over an articulated body. This scenario occurs, for example, in vision-based applications on a truck where additional cameras have been installed on the trailer in order to restore omni-directional perception abilities. We call the result an Articulated Multi-Perspective Camera (AMPC). Through our research, we have shown that AMPC motion renders the internal articulation joint state observable, and that optimization over all parameters (i.e. relative displacement and joint configuration both before and after a displacement) is possible and enhances motion estimation accuracy with respect to using the cameras on each rigid part alone. A demo of the framework can be found on our page on 360-degree Multi-perspective cameras. Another exciting case that we looked into is the flexible robot scenario, where multiple cameras are distributed over an elastically deformable body. Surprisingly, by including a physical deformation model of the carrier platform, we can latently observe inertial variables such as the alignment with respect to gravity in parallel to camera motion. We call this a Non-rigid multi-perspective camera system.

X. Peng, J. Cui, and L. Kneip. Articulated multi-perspective cameras and their application to truck motion estimation. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Macau, China, November 2019. [youtube] [bilibili]

M. Li, J. Yang, and L. Kneip. Relative pose for nonrigid multi-perspective cameras: The static case. In Proceedings of the International Conference on 3D Vision (3DV), 2024. [pdf]

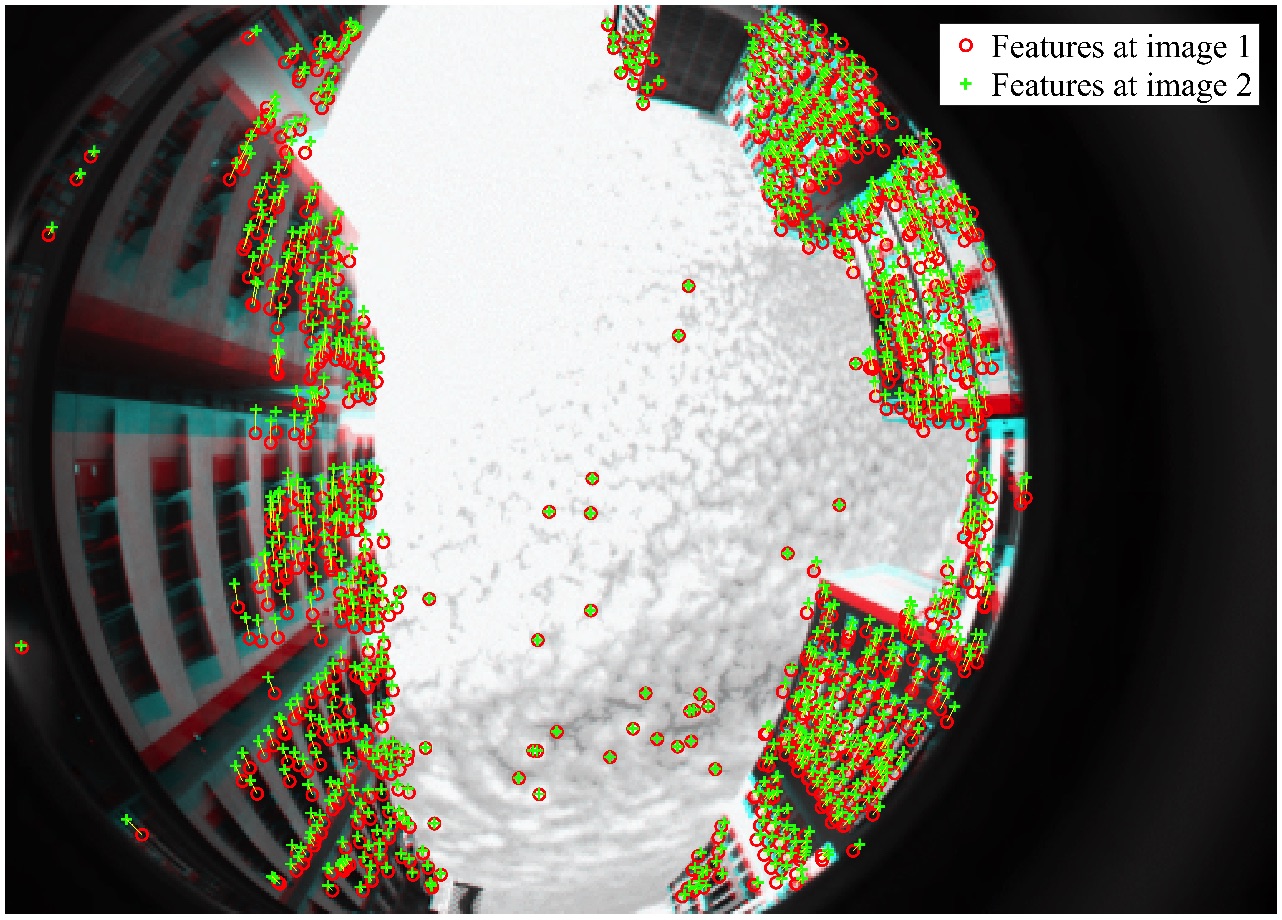

Omni-directional cameras

Similar to MPCs, omni-directional cameras (e.g. catadioptric cameras) have the advantage of multi-directional observations given by an enlarged field of view. The resulting benefits are a potential increase in the number of features available for tracking and an improved distinctiveness of rotation-induced and translation-induced optical flow patterns. Omni-directional cameras therefore have the potential advantage of improved motion estimation accuracy and robustness over regular, monocular cameras. One particularity of omni-directional cameras is that the field of view may exceed 180 degrees, and that, as a result, normalized measurements may have to be expressed as a general 3D bearing vector rather than a 2D (homogeneous) point on the normalized image plane. It is worthwhile to note that all algorithms in OpenGV have been consistently designed to operate with 3D bearing vectors, and are thus ready to be applied to (calibrated) omni-directional cameras. We have furthermore collaborated on a full, MSCKF-based visual odometry framework for omni-directional measurements.
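The difference between the two measurement representations can be sketched in a few lines. The helper names below are hypothetical and the code does not use the OpenGV API; it only shows that a unit bearing vector can encode any viewing direction, whereas a point on the normalized image plane (z = 1) exists only for rays pointing into the front half-space.

```python
import numpy as np


def normalized_point_to_bearing(xy):
    """Lift a point (x, y) on the normalized image plane to a unit bearing vector."""
    v = np.array([xy[0], xy[1], 1.0])
    return v / np.linalg.norm(v)


def bearing_to_normalized_point(bearing, eps=1e-9):
    """Project a bearing vector onto the plane z = 1.

    Returns None if the ray is perpendicular to or points behind the optical
    axis, which can happen once the field of view reaches or exceeds 180 degrees.
    """
    b = np.asarray(bearing, dtype=float)
    if b[2] <= eps:
        return None
    return b[:2] / b[2]


def angular_error(b1, b2):
    """Angle in radians between two unit bearing vectors.

    Unlike a pixel distance, this error is defined for any pair of directions.
    """
    return float(np.arccos(np.clip(np.dot(b1, b2), -1.0, 1.0)))


if __name__ == "__main__":
    front = normalized_point_to_bearing((0.3, -0.2))
    print(bearing_to_normalized_point(front))

    # A ray slightly behind the camera, as seen by a wide fisheye or mirror.
    rear = np.array([1.0, 0.0, -0.2])
    rear = rear / np.linalg.norm(rear)
    print(bearing_to_normalized_point(rear))
    print(angular_error(front, rear))
```

Expressing residuals as angles between bearing vectors, as in `angular_error`, is one common way to keep a cost function well defined over the full sphere of directions.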

M. Ramezani, K. Khoshelham, and L. Kneip. Omnidirectional Visual-Inertial Odometry Using Multi-State Constraint Kalman Filter. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Vancouver, Canada, September 2017 [pdf]

Event cameras

Regular cameras suffer from disadvantages under certain conditions. For example, they are unable to capture blur-free images in highly dynamic or low illumination conditions. They are also unable to produce clear images when the camera faces different parts of a scene with substantially different illumination. At MPL, we have started to investigate a still relatively new, bio-inspired visual sensor called an event camera or dynamic vision sensor. It reports pixel-level image changes rather than absolute intensities. In particular, the image changes are reported asynchronously and at a very high temporal resolution. Though the potential of event cameras in highly dynamic or challenging illumination conditions is fairly clear, the complicated nature of the sensor data makes reliable, real-time SLAM a particularly hard problem to solve. MPL has contributed to novel algorithms for event-based SLAM, mapping, pose estimation, and sensor calibration. Please visit our page on event camera research for more information on this topic.
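To make the notion of pixel-level change events concrete, the following sketch simulates a single pixel under the commonly used idealized sensor model, in which an event is emitted whenever the log intensity has changed by a fixed contrast threshold since the last event. The model, the `Event` tuple, the function name, and the threshold value are assumptions made for illustration; real sensors add noise, latency, and refractory effects that are not modelled here.

```python
from typing import List, NamedTuple, Sequence


class Event(NamedTuple):
    t: float       # timestamp in seconds
    x: int         # pixel column
    y: int         # pixel row
    polarity: int  # +1 for a brightness increase, -1 for a decrease


def generate_events(log_intensity: Sequence[float],
                    timestamps: Sequence[float],
                    x: int, y: int,
                    contrast_threshold: float = 0.2) -> List[Event]:
    """Emit events for one pixel from sampled log-intensity values.

    The pixel stores a reference level. Each time the signal deviates from it
    by at least contrast_threshold, an event is emitted and the reference
    moves by one threshold step in the direction of the change.
    """
    events: List[Event] = []
    reference = log_intensity[0]
    for t, value in zip(timestamps[1:], log_intensity[1:]):
        while abs(value - reference) >= contrast_threshold:
            polarity = 1 if value > reference else -1
            reference += polarity * contrast_threshold
            events.append(Event(t, x, y, polarity))
    return events


if __name__ == "__main__":
    times = [0.000, 0.001, 0.002, 0.003, 0.004]
    signal = [0.0, 0.1, 0.5, 0.5, 0.05]  # hypothetical log-intensity samples
    for event in generate_events(signal, times, x=10, y=20):
        print(event)
```

Note that the constant stretch of the signal produces no output at all: a static pixel stays silent, while a large change produces several events in quick succession.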

Y. Zhou, G. Gallego, H. Rebecq, L. Kneip, H. Li, and D. Scaramuzza. Semi-dense 3d reconstruction with a stereo event camera. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, September 2018 [pdf]

X. Peng, Y. Wang, L. Gao, and L. Kneip. Globally-optimal event camera motion estimation. In Proceedings of the European Conference on Computer Vision (ECCV), Glasgow, UK, August 2020 [pdf] [youtube] [bilibili]

X. Peng, L. Gao, Y. Wang, and L. Kneip. Globally-Optimal Contrast Maximisation for Event Cameras. IEEE Transactions on Pattern Analysis and Machine Intelligence (PAMI), 2021 [pdf]

K. Huang, Y. Wang, and L. Kneip. Dynamic Event Camera Calibration. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, September 2021 [pdf] [code] [youtube] [bilibili]

Y. Zuo, L. Cui, X. Peng, Y. Xu, S. Gao, X. Wang, and L. Kneip. Accurate Depth Estimation from a Hybrid Event-RGB Stereo Setup. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, September 2021.

X. Peng, W. Xu, J. Yang, and L. Kneip. Continuous Event-Line Constraint for Closed-Form Velocity Initialization. In Proceedings of the British Machine Vision Conference (BMVC), 2021. [pdf]

Y. Zuo, J. Yang, J. Chen, X. Wang, Y. Wang, and L. Kneip. DEVO: Depth-Event Camera Visual Odometry in Challenging Conditions. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2022. [pdf] [youtube]

L. Gao, Y. Liang, J. Yang, S. Wu, C. Wang, J. Chen, and L. Kneip. VECtor: A Versatile Event-Centric Benchmark for Multi-Sensor SLAM. Robotics and Automation Letters (RAL), 7(3): 8217–8224, 2022. [pdf] [supplementary] [code] [youtube]

Y. Wang, J. Yang, X. Peng, P. Wu, L. Gao, K. Huang, J. Chen, and L. Kneip. Visual Odometry with an Event Camera Using Continuous Ray Warping and Volumetric Contrast Maximization. MDPI Sensors, 22(15):5687, 2022. [pdf] [youtube]

J. Chen, Y. Zhu, D. Lian, J. Yang, Y. Wang, R. Zhang, X. Liu, S. Qian, L. Kneip, and S. Gao. Event-based video frame interpolation. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), 2023. [pdf]

L. Gao, H. Su, D. Gehrig, M. Cannici, D. Scaramuzza, and L. Kneip. A 5-Point Minimal Solver for Event Camera Relative Motion Estimation. In Proceedings of the International Conference on Computer Vision (ICCV), 2023. Oral Presentation [pdf] [youtube] [code]

W. Xu, X. Peng, and L. Kneip. Tight Fusion of Events and Inertial Measurements for Direct Velocity Estimation. IEEE Transactions on Robotics (T-RO), 40:240–256, 2023. [pdf]

W. Xu, S. Zhang, L. Cui, X. Peng, and L. Kneip. Event-based visual odometry on non-holonomic ground vehicles. In Proceedings of the International Conference on 3D Vision (3DV), 2024. [pdf] [code] [youtube]

L. Gao, D. Gehrig, H. Su, D. Scaramuzza, and L. Kneip. An n-point linear solver for line and motion estimation with event cameras. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2024. Oral presentation (0.8% acceptance rate!) [pdf] [code] [youtube]

Z. Ren, B. Liao, D. Kong, J. Li, P. Liu, L. Kneip, G. Gallego, and Y. Zhou. Motion and structure from event-based normal flow. In Proceedings of the European Conference on Computer Vision (ECCV), 2024. [pdf]

R. Yuan, T. Liu, Z. Dai, Y.-F. Zuo, and L. Kneip. EVIT: Event-Based Visual-Inertial Tracking in Semi-Dense Maps Using Windowed Nonlinear Optimization. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), 2024. [pdf] [youtube] [code]

Y. Zuo, W. Xu, X. Wang, Y. Wang, and L. Kneip. Cross-Modal Semi-Dense 6-DoF Tracking of an Event Camera in Challenging Conditions. IEEE Transactions on Robotics (T-RO), 40:1600–1616, 2024. [pdf] [code]

Z. Liu, B. Guan, Y. Shang, Q. Yu, and L. Kneip. Line-based 6-DoF Object Pose Estimation and Tracking With an Event Camera. IEEE Transactions on Image Processing (TIP), 33:4765–4780, 2024. [pdf]

T. Liu, R. Yuan, Y. Ju, X. Xu, J. Yang, X. Meng, X. Lagorce, and L. Kneip. GS-EVT: Cross-Modal Event Camera Tracking based on Gaussian Splatting. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2025. [pdf] [code] [youtube]

H. Su, L. Gao, T. Liu, and L. Kneip. Motion-aware optical camera communication with event cameras. Robotics and Automation Letters (RAL), 10(2):1385–1392, 2025. [pdf] [video] [code]

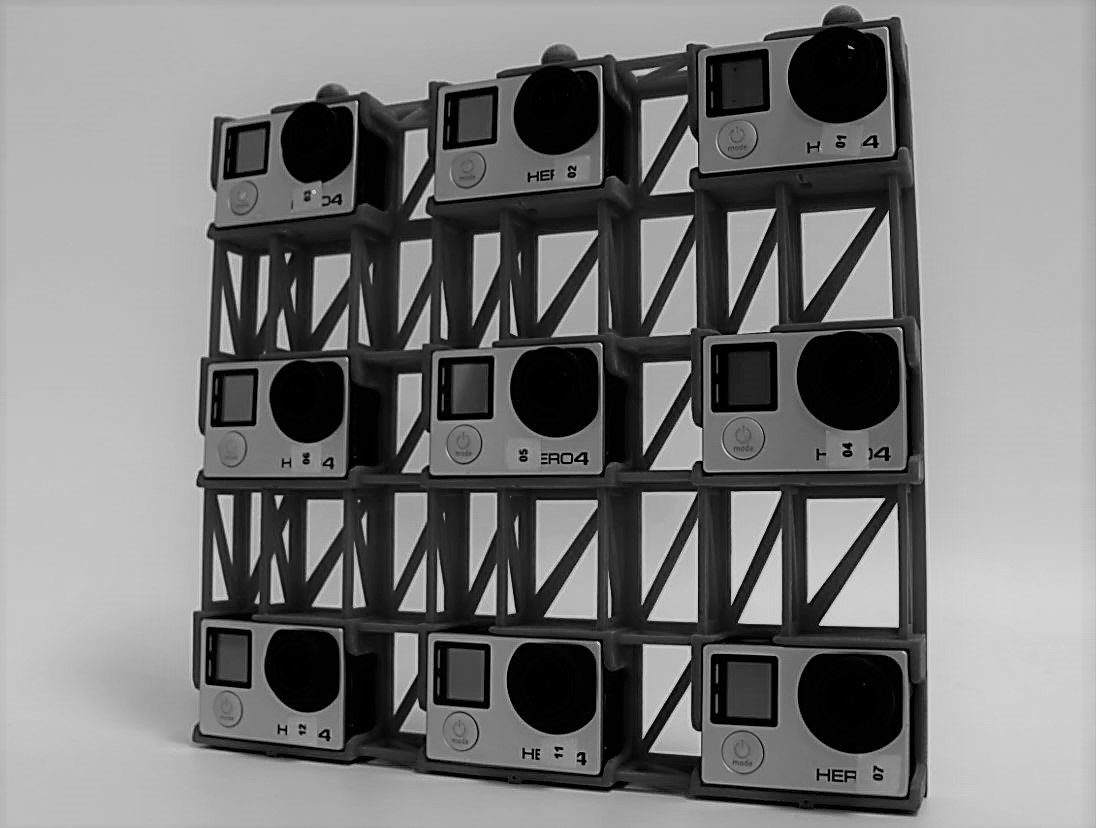

Light-field cameras

Stereo cameras only have a one-dimensional disparity space, which makes depth unobservable in situations where image edges are primarily aligned with the stereo camera's baseline vector. Light-field cameras represent an interesting, multi-baseline extension of stereo cameras. A typical arrangement consists of a square grid of multiple forward-facing cameras, thus enabling disparity measurements in all directions. In our recent research, we exploit the high redundancy in light-field imagery for accurate, reliable visual SLAM. Our results show state-of-the-art tracking accuracy comparable to what is achieved by dense depth camera alternatives.
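The effect of edge orientation on disparity observability can be illustrated with a toy geometric score. The function below is a hypothetical illustration, not a quantity used in the cited work: it returns the largest absolute sine of the angle between an image edge and any available baseline direction, so that 0 means the edge is parallel to every baseline (a shift along the edge leaves the image unchanged) and 1 means at least one baseline is perpendicular to the edge.

```python
import numpy as np


def unit(v):
    v = np.asarray(v, dtype=float)
    return v / np.linalg.norm(v)


def disparity_observability(edge_direction, baselines):
    """Best |sin(angle)| between a 2D image edge and any 2D baseline direction."""
    e = unit(edge_direction)
    scores = []
    for b in baselines:
        b = unit(b)
        # Magnitude of the 2D cross product of two unit vectors equals |sin(angle)|.
        scores.append(abs(e[0] * b[1] - e[1] * b[0]))
    return max(scores)


if __name__ == "__main__":
    stereo = [(1.0, 0.0)]              # single horizontal baseline
    grid = [(1.0, 0.0), (0.0, 1.0)]    # baselines of a square camera grid
    horizontal_edge = (1.0, 0.0)
    print(disparity_observability(horizontal_edge, stereo))
    print(disparity_observability(horizontal_edge, grid))
```

For the horizontal edge, the single-baseline stereo pair scores zero while the camera grid still has a perpendicular baseline available.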

P. Yu, C. Wang, Z. Wang, J. Yu, and L. Kneip. Accurate line-based relative pose estimation with camera matrices. IEEE Access, 8:88294–88307, 2020. Open access [pdf]