360-degree surround-view multi-perspective cameras

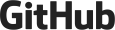

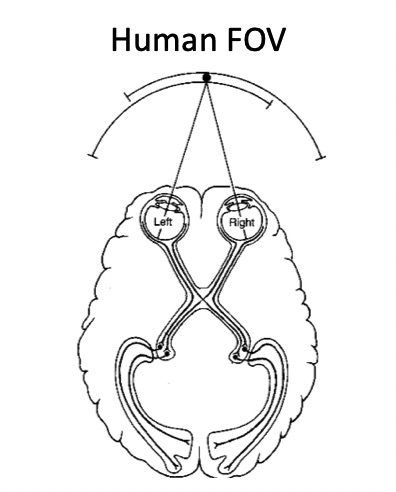

Since Prof. Kneip's early involvement in the EU FP7 project V-Charge, MPL has continued to investigate VSLAM based on multi-perspective camera systems (MPCs), particularly those in which the cameras share very little overlap in their fields of view. Such systems are common on passenger vehicles, which are often equipped with a 360-degree surround-view camera system for parking assistance. Given the close-to-market nature of these sensors, whether MPCs can be used for VSLAM and for solving certain vehicle autonomy problems is an economically relevant question. While the tracking accuracy and robustness of vision-based solutions can hardly compete with Lidar-based solutions, they may already suffice for less safety-critical applications such as Autonomous Valet Parking (AVP). Perhaps somewhat surprisingly, MPCs can render metric scale observable even when their fields of view do not overlap at all. Surround-view MPCs also share the benefits of any large field-of-view camera: a good ability to distinguish rotational from translational motion patterns, and high tracking robustness (for example, tracking can be maintained even if one of the cameras faces a relatively texture-less part of the scene).
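To illustrate why metric scale can become observable without overlap, the following minimal sketch evaluates the generalized epipolar constraint for rays expressed as Plücker lines in the rig frame. It is an illustration under stated assumptions, not the method of any paper listed on this page: the camera offset, the motion `R`, `t`, and the point `X1` are hypothetical numbers, and the convention `X1 = R * X2 + t` is chosen for this example only.

```python
import math

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def scale(s, a):
    return tuple(s * x for x in a)

def normalize(a):
    n = math.sqrt(dot(a, a))
    return tuple(x / n for x in a)

def matvec(R, v):
    return tuple(dot(row, v) for row in R)

def transpose(R):
    return tuple(zip(*R))

def rot_z(angle):
    c, s = math.cos(angle), math.sin(angle)
    return ((c, -s, 0.0), (s, c, 0.0), (0.0, 0.0, 1.0))

def plucker_ray(center, point):
    """Ray from a camera centre through a 3D point, both in the rig frame."""
    f = normalize(sub(point, center))
    return f, cross(center, f)  # (direction, moment)

def residual(ray1, ray2, R, t):
    """Generalized epipolar residual, assuming X1 = R * X2 + t."""
    f1, m1 = ray1
    f2, m2 = ray2
    Rf2, Rm2 = matvec(R, f2), matvec(R, m2)
    # Only the first term depends on t; the moment terms fix the scale.
    return dot(f1, cross(t, Rf2)) + dot(f1, Rm2) + dot(m1, Rf2)

# Hypothetical rig motion and scene point (rig frame at time 1).
R = rot_z(0.2)
t = (1.0, 0.1, 0.0)
X1 = (6.0, 3.0, 1.0)
X2 = matvec(transpose(R), sub(X1, t))  # same point in the rig frame at time 2

def residuals_for(center):
    """Residual at the true translation and at a doubled translation."""
    ray1 = plucker_ray(center, X1)
    ray2 = plucker_ray(center, X2)
    return residual(ray1, ray2, R, t), residual(ray1, ray2, R, scale(2.0, t))

# Camera mounted 2 m away from the rig origin (non-central case).
offset_true, offset_scaled = residuals_for((2.0, 0.0, 0.0))
# Camera at the rig origin (central case).
central_true, central_scaled = residuals_for((0.0, 0.0, 0.0))

print("offset camera :", offset_true, offset_scaled)
print("central camera:", central_true, central_scaled)
```

For the offset camera the residual vanishes only at the true translation, whereas for the central camera it vanishes for any scaling of `t`. Note that the example uses a motion with rotation: for a pure translation observed through intra-camera correspondences only, the moment terms cancel and scale is again unobservable.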

Visual SLAM and odometry

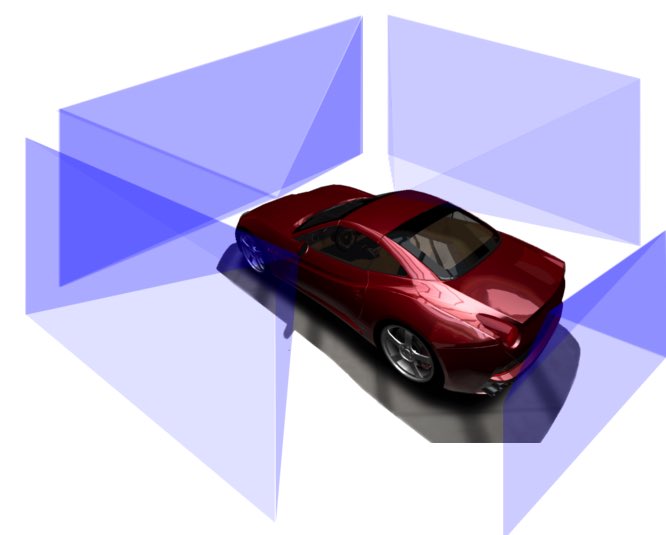

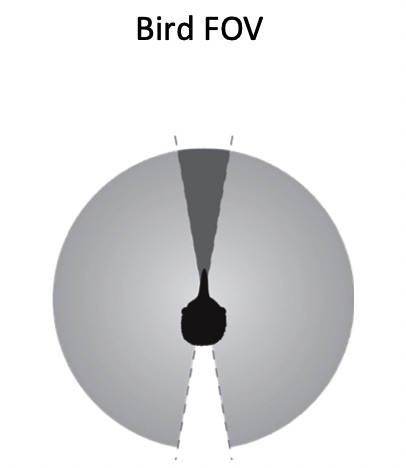

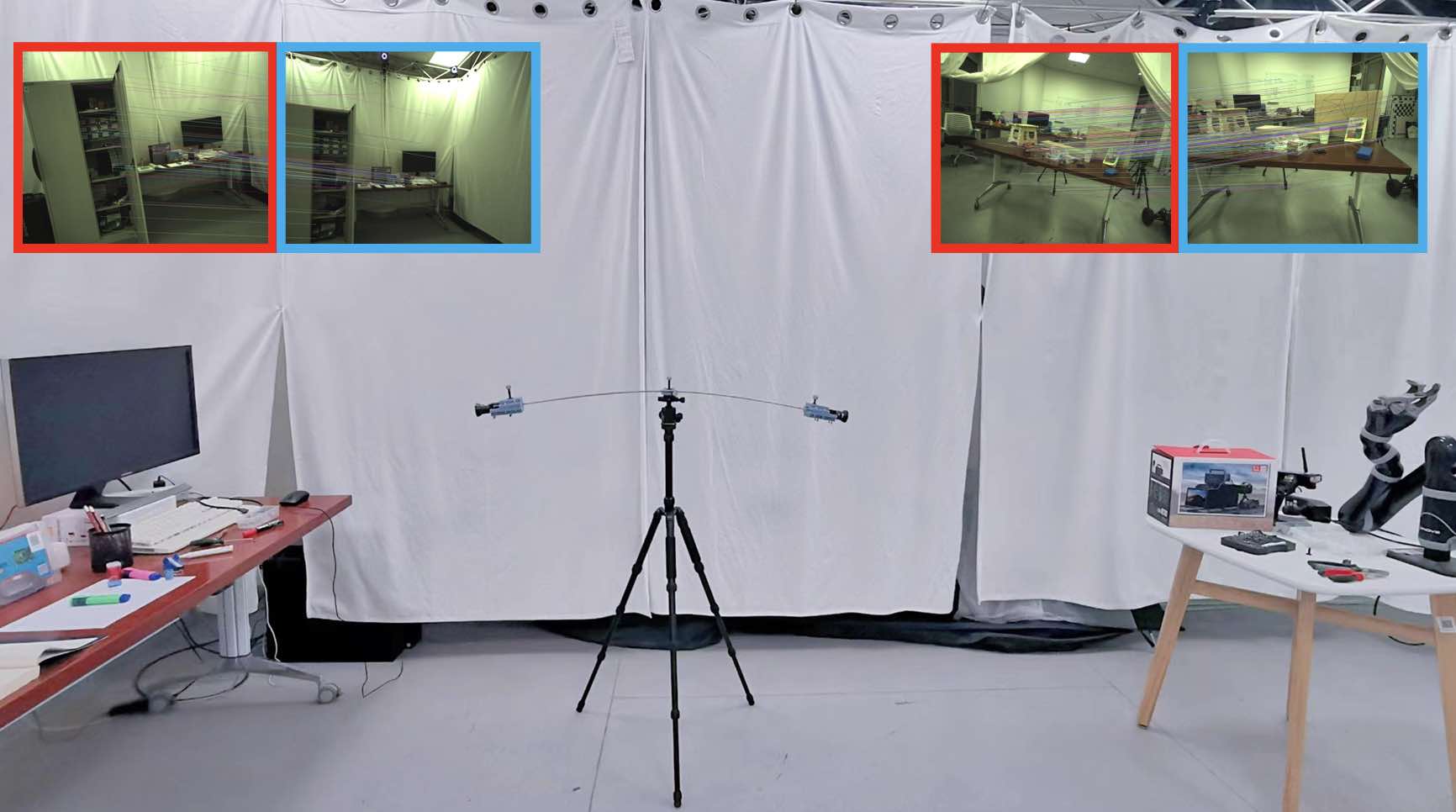

In our early research in 2012, we analysed the fundamental case of a non-overlapping stereo rig, and developed the world's first real-time visual SLAM framework for the completely non-overlapping case. The concept is bio-inspired: the eye arrangement of common birds such as pigeons is comparable to that of a non-overlapping stereo rig.

T. Kazik, L. Kneip, J. Nikolic, M. Pollefeys, and R. Siegwart. Real-time 6D stereo visual odometry with non-overlapping fields of view. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, USA, June 2012 [pdf] [video]

Y. Wang and L. Kneip. On scale initialization in non-overlapping multi-perspective visual odometry. In Proceedings of the International Conference on Computer Vision Systems, Shenzhen, July 2017. Best Student Paper Award

More recently, we have explored novel solutions for direct frame-to-frame relative displacement calculation, again using multi-perspective surround-view cameras. These solutions are very effective in producing robust visual odometry results for passenger vehicles.

Y. Wang, K. Huang, X. Peng, H. Li, and L. Kneip. Reliable frame-to-frame motion estimation for vehicle-mounted surround-view camera systems. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, May 2020 [youtube] [bilibili]

Influence in industry

MPL alumni work with Motovis Intelligent Technologies Ltd. here in Shanghai on the development of smart car functionalities, in particular "Home-Zone Parking (HZP)" and "Automated Valet Parking (AVP)". MPL research has inspired the development of highly successful prototype solutions that run real-time SLAM on non-overlapping multi-camera systems and embedded hardware, and thus enable cars to drive by themselves. The solutions use both sparse and higher-level semantic features obtained from FPGA front-end units to generate high-definition maps in near real-time. During internships, MPL members have also collaborated with Motovis on online calibration methods for non-overlapping multi-camera systems, which notably require no additional calibration hardware. In addition, a novel surround-view camera SLAM system was developed that uses a continuous kinematic vehicle model as the motion model in the back-end optimizer.

Z. Ouyang, L. Hu, Y. Lu, Z. Wang, X. Peng, and L. Kneip. Online calibration of exterior orientations of a vehicle-mounted surround-view camera system. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, May 2020

K. Huang, Y. Wang, and L. Kneip. B-splines for Purely Vision-based Localization and Mapping on Non-holonomic Ground Vehicles. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2021 [pdf] [video]

Fundamental geometric problems

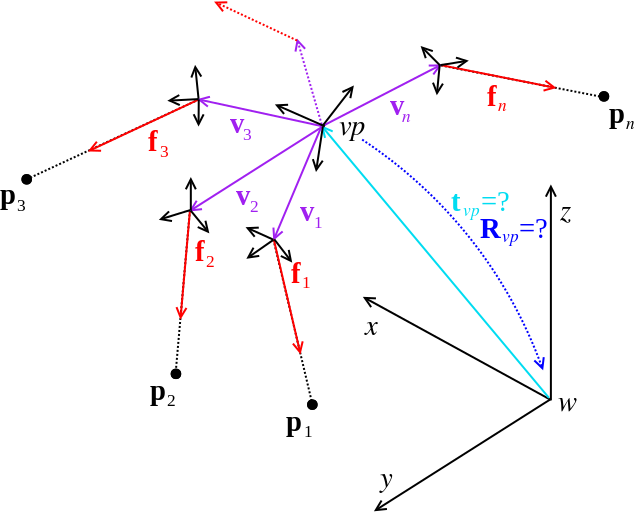

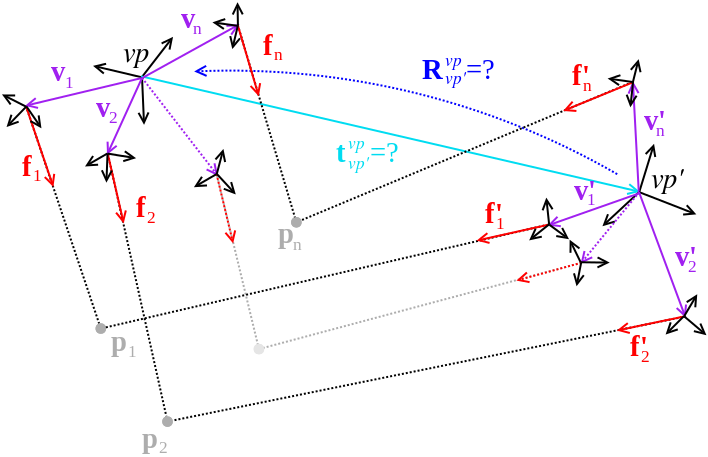

MPL is among the global leaders in the handling of MPCs, and has put strong emphasis on the development of fundamental geometric pose calculation algorithms for non-central (including generalized, multi-perspective, and non-overlapping) camera systems. More information on this can be found on our research page on geometric solvers, and most solvers can be found in our open-source project OpenGV.

L. Kneip, P. Furgale, and R. Siegwart. Using multi-camera systems in robotics: efficient solutions to the NPnP problem. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Karlsruhe, Germany, May 2013. Best computer vision paper finalist [pdf]

L. Kneip, H. Li, and Y. Seo. UPnP: An optimal O(n) solution to the absolute pose problem with universal applicability. In Proceedings of the European Conference on Computer Vision (ECCV), Zurich, Switzerland, September 2014

L. Kneip and H. Li. Efficient computation of relative pose for multi-camera systems. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, USA, June 2014 [pdf]

J. Zhao, W. Xu, and L. Kneip. A certifiably globally optimal solution to generalized essential matrix estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, USA, June 2020 [pdf] [youtube] [bilibili]

J. Zhao, B. Guan, and L. Kneip. Six-point method for multi-camera systems with reduced solution space. In Proceedings of the European Conference on Computer Vision (ECCV), 2024 [pdf]

Summary contributions:

L. Kneip and P. Furgale. OpenGV: A unified and generalized approach to calibrated geometric vision. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, China, May 2014 [pdf] [code] [doc]

L. Kneip. Real-Time Scalable Structure from Motion: From Fundamental Geometric Vision to Collaborative Mapping. PhD thesis, ETH Zurich, 2012. ETH Dissertation No. 20628 [pdf]

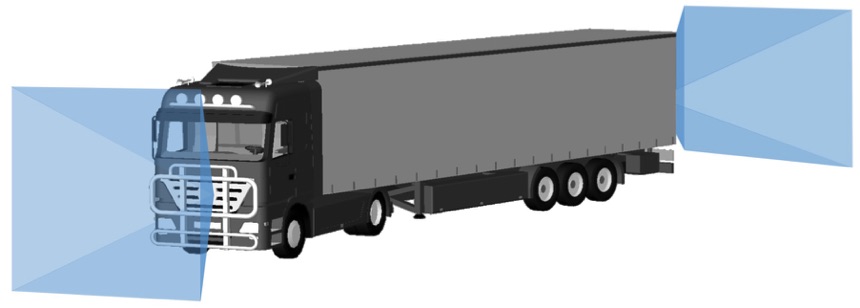

Articulated multi-perspective cameras

An intricate case of a Multi-Perspective Camera (MPC) arises when the cameras are distributed over an articulated body. This occurs, for example, in vision-based applications on a truck where additional cameras are installed on the trailer to restore omni-directional perception. We call the result an Articulated Multi-Perspective Camera (AMPC). Our research has shown that AMPC motion renders the internal articulation joint state observable, and that optimization over all parameters (i.e. the relative displacement and the joint configuration both before and after the displacement) is possible and improves motion estimation accuracy compared to using the cameras on each rigid part alone.
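As a rough illustration of how the joint configuration enters the problem, the planar sketch below chains the truck motion with the hitch angle before and after the displacement to obtain the relative pose seen by a trailer-mounted camera. It assumes a single revolute hitch joint and a 2D world; the mounting values `HITCH_IN_TRUCK` and `CAM_IN_TRAILER` and all function names are hypothetical, and this is not the formulation used in the cited paper.

```python
import math

# A planar pose is a tuple (theta, x, y) mapping child-frame points to the parent frame.

def compose(a, b):
    """Chain two planar poses: first b (child to middle), then a (middle to parent)."""
    c, s = math.cos(a[0]), math.sin(a[0])
    return (a[0] + b[0],
            a[1] + c * b[1] - s * b[2],
            a[2] + s * b[1] + c * b[2])

def inverse(a):
    """Inverse of a planar pose."""
    c, s = math.cos(a[0]), math.sin(a[0])
    return (-a[0],
            -(c * a[1] + s * a[2]),
            -(-s * a[1] + c * a[2]))

# Hypothetical mounting geometry (metres, radians).
HITCH_IN_TRUCK = (0.0, -1.0, 0.0)      # hitch 1 m behind the truck origin
CAM_IN_TRAILER = (math.pi, -8.0, 0.0)  # rear-facing camera 8 m behind the hitch

def trailer_cam_in_truck(joint_angle):
    """Pose of the trailer camera in the truck frame for a given hitch angle."""
    joint = (joint_angle, 0.0, 0.0)
    return compose(compose(HITCH_IN_TRUCK, joint), CAM_IN_TRAILER)

def trailer_cam_motion(truck_motion, joint_before, joint_after):
    """Relative pose of the trailer camera between two instants.

    truck_motion maps the truck frame at time 2 into the truck frame at time 1.
    """
    cam_before = trailer_cam_in_truck(joint_before)
    cam_after = trailer_cam_in_truck(joint_after)
    return compose(compose(inverse(cam_before), truck_motion), cam_after)

forward = (0.0, 1.0, 0.0)  # truck drives 1 m straight ahead
rigid = trailer_cam_motion(forward, 0.0, 0.0)
articulated = trailer_cam_motion(forward, 0.0, 0.1)

print("joint fixed   :", rigid)
print("joint changing:", articulated)
```

The same truck motion produces different trailer-camera motions depending on the two joint angles, which is why the joint state has to be part of the estimated parameters and, conversely, why observations from the trailer cameras carry information about it.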

X. Peng, J. Cui, and L. Kneip. Articulated multi-perspective cameras and their application to truck motion estimation. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Macau, China, November 2019 [youtube] [bilibili]

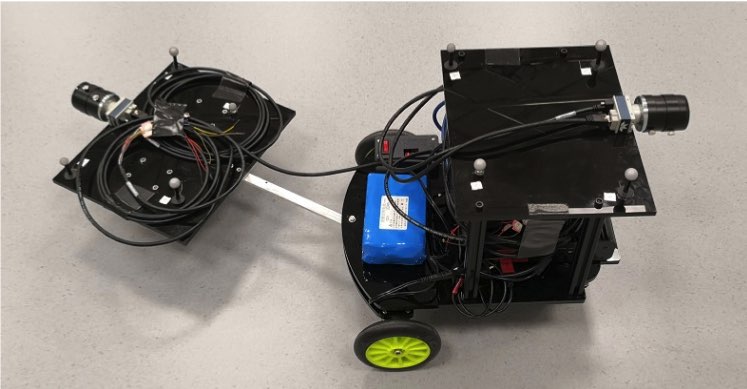

Nonrigid multi-perspective cameras

A yet more exotic case arises if the cameras remain exteroceptive but are mounted on a non-rigid, flexible support structure. This can happen in several scenarios, for example if the cameras are mounted on the tips of a fixed-wing UAV, or on a compliant, flexible robot. At MPL, we have started to investigate this case and to answer the question of whether generalized camera motion estimation is still possible with such a setup. As it turns out, a physical deformation model of the structure that depends on latent variables can be included in the estimation, thereby rendering relative pose estimation a solvable problem. For example, in the static case, we may include a simple cantilever model that depends on the orientation with respect to gravity, thereby transforming the multi-camera rig into a passive inertial sensing device.
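The link between a cantilever model and gravity sensing can be sketched with the textbook Euler-Bernoulli result for a uniformly loaded cantilever, whose tip deflection is w L^4 / (8 E I). The example below is only an assumption-laden illustration and may differ from the model in the cited paper: the beam is taken to lie along the rig x-axis, to be clamped at the origin, to bend under its own weight only, to have a symmetric cross-section, and to deflect by small amounts. All numeric values and function names are hypothetical.

```python
def deflection_gain(length, mass_per_length, flexural_rigidity):
    """Tip deflection per unit transverse acceleration: mu * L^4 / (8 * E * I)."""
    return mass_per_length * length ** 4 / (8.0 * flexural_rigidity)

def tip_deflection(gravity_in_rig, length, mass_per_length, flexural_rigidity):
    """Displacement of the beam tip (and a camera mounted there) in the rig frame.

    Only the gravity components transverse to the beam (y and z) cause bending.
    """
    _, gy, gz = gravity_in_rig
    k = deflection_gain(length, mass_per_length, flexural_rigidity)
    return (0.0, k * gy, k * gz)

def transverse_gravity(deflection, length, mass_per_length, flexural_rigidity):
    """Invert the model: recover the transverse gravity components from a deflection."""
    k = deflection_gain(length, mass_per_length, flexural_rigidity)
    return (deflection[1] / k, deflection[2] / k)

# Hypothetical beam: 1 m long, 0.5 kg/m, E*I = 50 N*m^2.
BEAM = dict(length=1.0, mass_per_length=0.5, flexural_rigidity=50.0)

level = tip_deflection((0.0, 0.0, -9.81), **BEAM)     # beam horizontal
rolled = tip_deflection((0.0, -9.81, 0.0), **BEAM)    # rig rolled by 90 degrees
vertical = tip_deflection((-9.81, 0.0, 0.0), **BEAM)  # beam aligned with gravity

print("level   :", level)
print("rolled  :", rolled)
print("vertical:", vertical)
print("recovered transverse gravity:", transverse_gravity(level, **BEAM))
```

Because the deflection of the tip camera changes with the orientation of the rig relative to gravity, estimating the deformation from image observations also constrains the gravity direction, which is the sense in which the rig acts as a passive inertial sensor.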

M. Li, J. Yang, and L. Kneip. Relative pose for nonrigid multi-perspective cameras: The static case. In Proceedings of the International Conference on 3D Vision (3DV), 2024. Oral presentation [pdf]