Visual SLAM

In the past, MPL members have contributed to a large number of visual tracking and mapping solutions based on different kinds of sensors and scenarios. The increasing demand for real-time, high-precision visual tracking and mapping solutions for navigation and localization on computationally constrained platforms has notably been driving research towards more versatile and scalable solutions. Visual tracking and mapping solutions in the present context refer to either simple local tracking solutions or complete Simultaneous Localization And Mapping (SLAM) systems. In terms of representations, the list of systems below encompasses both sparse and semi-dense low-level solutions as well as higher-level solutions that operate at the level of objects.

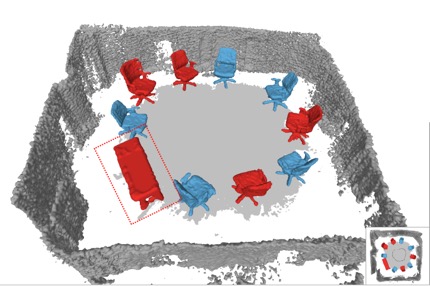

Collaborative visual SLAM with multiple MAVs

In this work, we extended visual odometry front-end nodes with a collaborative, remote structure-from-motion back-end node. The main idea is to process the keyframes generated by multiple visual odometry nodes running on multiple cameras in parallel (e.g. each camera may be mounted on a drone that is part of a swarm), and to merge them into one global map (e.g. on a remotely connected ground station). By reusing the relative keyframe orientation information from the visual odometry nodes, the task of the collaborative structure-from-motion back-end is mainly reduced to global optimization, overlap detection, loop closure, and merging of submaps. The concurrent design allows all of these tasks to be handled in parallel. The system runs in real time, and it has been tested on and applied to videos captured by a swarm of micro aerial vehicles.

C Forster, S Lynen, L Kneip, and R Siegwart. Collaborative visual SLAM with multiple MAVs. In Workshop on Integration of Perception and Control for Resource-Limited, Highly Dynamic, Autonomous Systems (RSS), Sydney, Australia, July 2012

C Forster, S Lynen, L Kneip, and D Scaramuzza. Collaborative monocular SLAM with multiple micro aerial vehicles. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Tokyo, Japan, November 2013 [video]

Semi-dense visual tracking with RGB-D cameras

This work introduces a novel strategy for semi-dense, real-time monocular camera tracking. We employ a geometric iterative closest point technique instead of a photometric error criterion, which has the conceptual advantage of requiring neither isotropic enlargement of the employed semi-dense regions nor pyramidal subsampling. We outline the detailed concepts leading to robustness and efficiency even for large frame-to-frame disparities, and demonstrate successful real-time processing over very large view-point changes and significantly corrupted semi-dense depth maps. The method is applied to RGB-D camera tracking.

Y Zhou, H Li, and L Kneip. Canny-VO: Visual Odometry with RGB-D Cameras based on Geometric 3D-2D Edge Alignment. IEEE Transactions on Robotics (T-RO), 35(1):1–16, 2019 [pdf]

Y Zhou, L Kneip, and H Li. Semi-dense Visual Odometry for RGB-D Cameras using Approximate Nearest Neighbour Fields. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Singapore, May 2017 [pdf]

L Kneip, Y Zhou, and H Li. SDICP: Semi-dense tracking based on iterative closest points. In Proceedings of the British Machine Vision Conference (BMVC), Swansea, UK, August 2015 [pdf]

Very fast tracking of depth sensors in structured environments

This work aims at a highly efficient solution to the point set registration problem in the particular case where the point sets have been collected in Manhattan worlds. Manhattan worlds are characterised by a primarily piece-wise planar structure in which each planar piece has a normal vector aligned with one of three mutually orthogonal primary directions. Our approach to efficient point set registration consists of two steps: we first identify the rotation and then the translation. In order to identify the rotation, we extract a normal vector for each point in the point cloud and fit the Manhattan reference frame to these normals. This procedure even gives us absolute orientation information for each point set. We then rotate the point sets into the Manhattan frame and project them onto the basis axes. This gives characteristic density signals that can be aligned individually for each translation dimension. The approach is used for RGB-D tracking in Manhattan worlds and achieves frame rates of over 100 Hz on regular CPUs. We have furthermore extended the work and relaxed the Manhattan world assumption to more general piece-wise planar environments.
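The translation step described above can be illustrated with a minimal sketch. It assumes that both point sets have already been rotated into a common Manhattan frame, and it simply histograms the coordinates along each axis and searches for the integer bin shift that maximises the correlation of the two density signals. The function names, the bin size, the search range, and the exhaustive search are illustrative assumptions and not the implementation from the publications listed below.

```python
import numpy as np

def align_translation_1d(ref, cur, bin_size=0.05, max_shift=1.0):
    """Estimate the shift t (in metres) such that cur + t best matches ref.

    ref, cur: 1D arrays with the coordinates of two point sets along one
    Manhattan axis. bin_size and max_shift are hypothetical tuning values.
    """
    ref = np.asarray(ref, dtype=float)
    cur = np.asarray(cur, dtype=float)
    # Pad the histogram range so that shifted signals never wrap around.
    pad = max_shift + bin_size
    lo = min(ref.min(), cur.min()) - pad
    hi = max(ref.max(), cur.max()) + pad
    edges = np.arange(lo, hi + bin_size, bin_size)
    h_ref, _ = np.histogram(ref, bins=edges)
    h_cur, _ = np.histogram(cur, bins=edges)
    # Exhaustively test integer bin shifts and keep the best correlation.
    n = int(round(max_shift / bin_size))
    shifts = np.arange(-n, n + 1)
    scores = [np.dot(h_ref, np.roll(h_cur, s)) for s in shifts]
    return shifts[int(np.argmax(scores))] * bin_size

def align_translation(ref_pts, cur_pts, **kwargs):
    """Align each of the three axes independently (points are N x 3)."""
    return np.array([
        align_translation_1d(ref_pts[:, k], cur_pts[:, k], **kwargs)
        for k in range(3)
    ])
```

Because each axis is handled as an independent 1D problem, the cost grows linearly with the number of points plus a small search per axis. The resolution of this sketch is limited to one histogram bin.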

Y Zhou, L Kneip, C Rodriguez, and H Li. Divide and conquer: Efficient density-based tracking of 3D sensors in Manhattan worlds. In Proceedings of the Asian Conference on Computer Vision (ACCV), Taipei, Taiwan, November 2016. Oral presentation [pdf] [code]

Y Zhou, L Kneip, and H Li. Real-Time Rotation Estimation for Dense Depth Sensors in Piece-wise Planar Environments. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, October 2016 [pdf] [video]

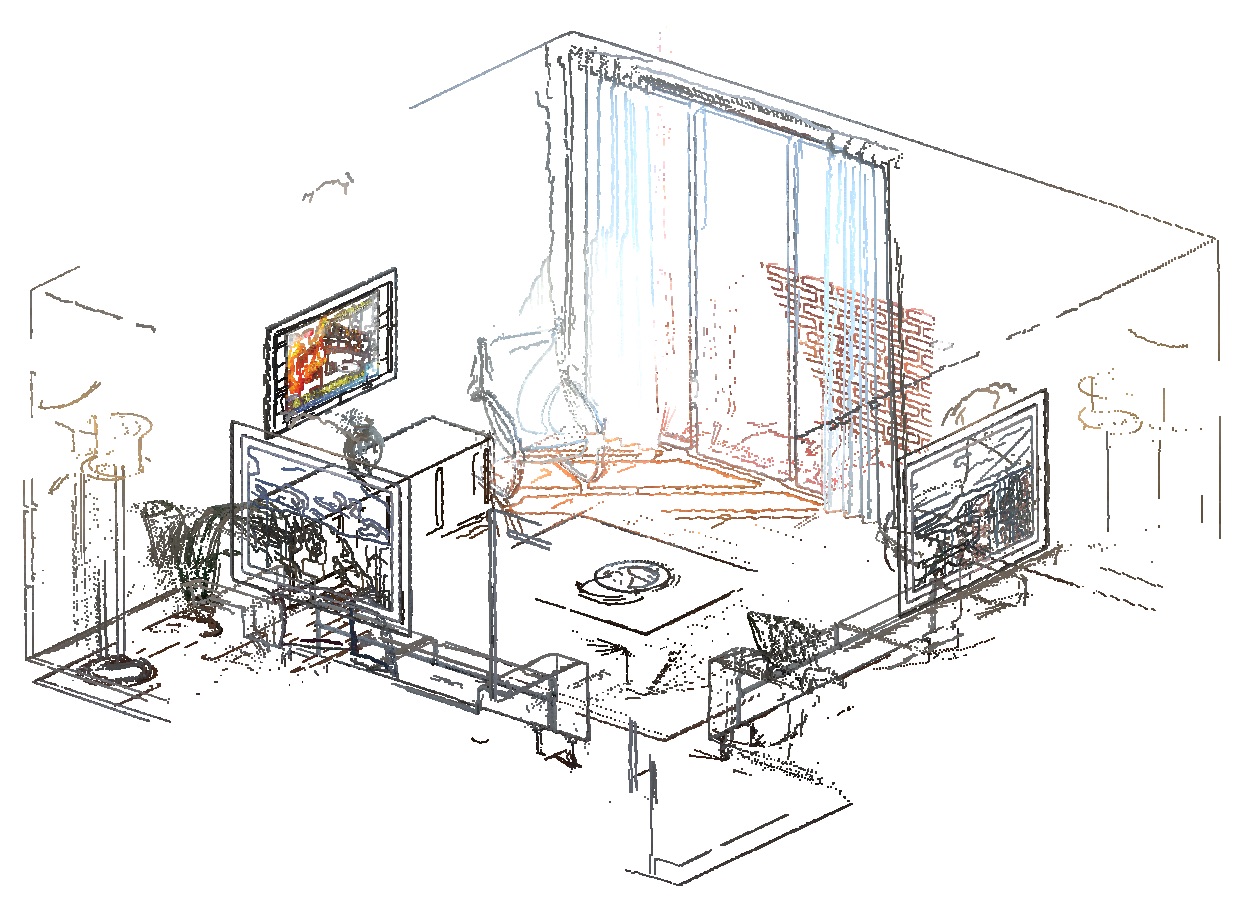

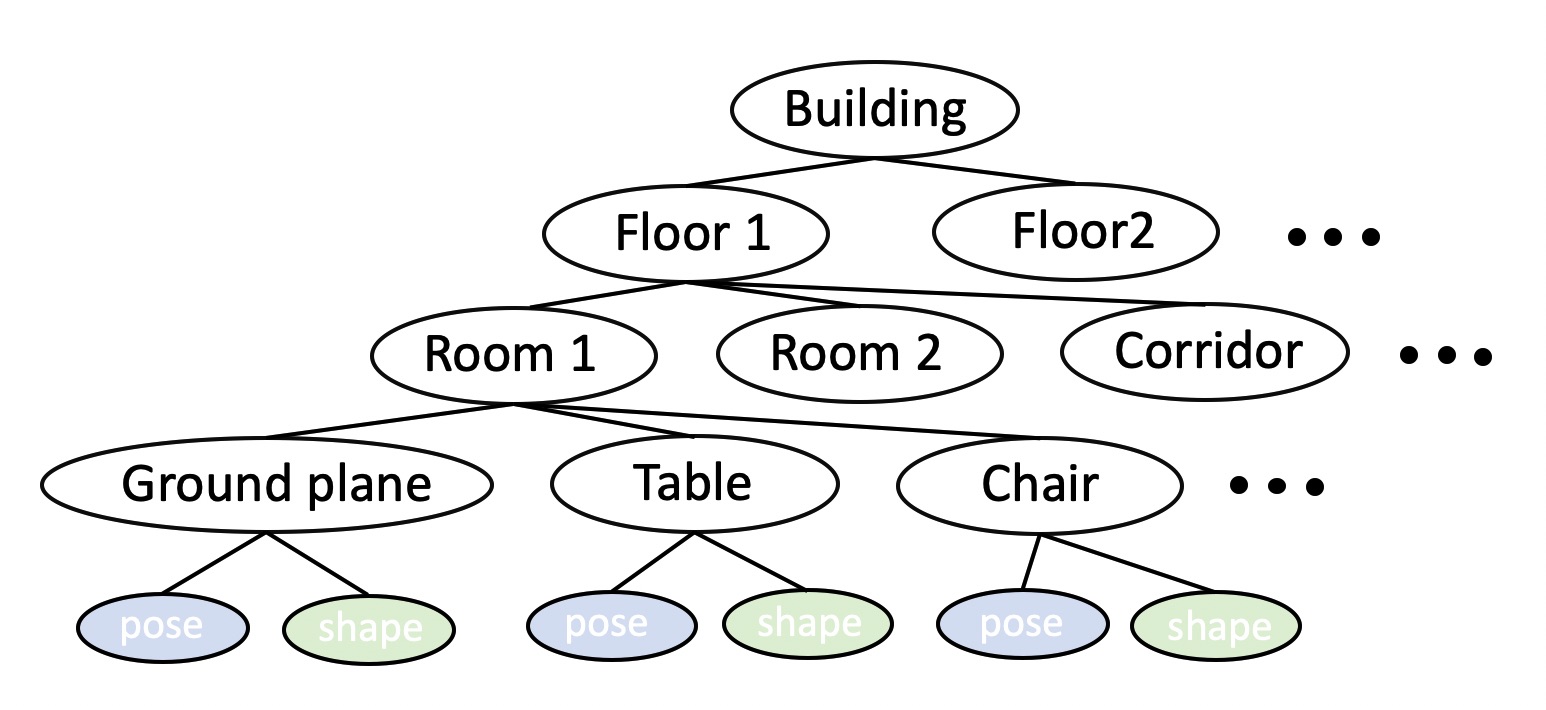

Semantic SLAM

We continuously aim at developing novel visual SLAM solutions in which the generated models of the environment cover everything from low-level geometry to higher-level aspects such as scene composition, object poses or shapes, dynamics, and semantic meaning. One such example is given by Deep-SLAM++. It employs object detectors to load and register full 3D models of objects. However, rather than just using fixed CAD models, we extend the idea to environments with unknown objects and only impose object priors by employing modern class-specific neural networks to generate complete model geometry proposals. The difficulty of using such predictions in a real SLAM scenario is that the prediction performance depends on the view-point and measurement quality, with even small changes of the input data sometimes leading to a large variability in the network output. We propose a discrete selection strategy that finds the best among multiple proposals from different registered views by enforcing agreement with the online depth measurements. The result is an effective object-level RGB-D SLAM system that produces compact, high-fidelity, and dense 3D maps with semantic annotations.
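The idea of selecting one shape proposal by its agreement with the measured depth can be sketched as follows. The score used here (mean nearest-neighbour distance between measured 3D points and the proposal's points, all expressed in one common frame) and both function names are assumptions made for illustration; the criterion used in Deep-SLAM++ may differ.

```python
import numpy as np

def mean_nn_distance(measured, model):
    """Mean distance from each measured point to its closest model point.

    measured: M x 3 array of back-projected depth measurements.
    model:    N x 3 array of points sampled from one shape proposal.
    """
    diff = measured[:, None, :] - model[None, :, :]   # M x N x 3
    dist = np.linalg.norm(diff, axis=2)               # M x N
    return float(dist.min(axis=1).mean())

def select_best_proposal(measured, proposals):
    """Return the index of the proposal that agrees best with the depth data,
    together with the score of every proposal (lower is better)."""
    scores = [mean_nn_distance(measured, p) for p in proposals]
    return int(np.argmin(scores)), scores
```

The brute-force distance computation keeps the sketch short. For larger point sets, a spatial index such as a k-d tree would be the usual choice.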

L Hu, W Xu, K Huang, and L Kneip. Deep-SLAM++: object-level RGBD SLAM based on class-specific deep shape priors. ArXiv e-prints, 2019 [pdf]

Visual inertial SLAM

Besides images, this framework takes short-term, full 3D relative rotation information from an Inertial Measurement Unit (IMU) into account. This supports the geometric computation and allows the traversed trajectory to be reconstructed reliably, even in situations of increased dynamics. Similar sensor setups are available in all modern smartphones and micro aerial vehicles. The presented minimal geometric solvers do not suffer from geometric degeneracies and always return a unique solution. They use at most two correspondences and are extremely fast, which makes them well suited for embedding into RANSAC and increases the robustness and efficiency of the algorithm. Combined with a windowed optimization back-end over keyframes, the framework achieves very fast and robust real-time 6 DoF tracking and mapping. The solution has been deployed on a small drone, where it tracks aggressive maneuvers flown by a human pilot in real time on regular embedded CPU architectures.
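To illustrate why a known relative rotation reduces the number of required correspondences, the following sketch recovers the translation direction between two views from two bearing-vector correspondences. It is the textbook epipolar-plane construction and not the solver from the publication below; unlike the solvers described above, it does not treat special cases such as zero translation. The function name and the frame conventions are assumptions.

```python
import numpy as np

def translation_direction_known_rotation(f1, f2, R):
    """Translation direction from two correspondences and a known rotation.

    f1, f2: 2 x 3 arrays of unit bearing vectors in frame 1 and frame 2.
    R:      3 x 3 rotation taking frame-2 vectors into frame 1
            (assumed to be given, e.g. by integrating gyroscope readings).
    Returns the unit vector t, the position of camera 2 seen from frame 1,
    which is observable only up to scale.
    """
    g = f2 @ R.T                 # frame-2 bearings rotated into frame 1
    n = np.cross(f1, g)          # epipolar-plane normals, each orthogonal to t
    t = np.cross(n[0], n[1])     # t lies in both epipolar planes
    norm = np.linalg.norm(t)
    if norm < 1e-12:
        raise ValueError("degenerate input for this simplified sketch")
    t = t / norm
    # Resolve the sign by requiring a positive depth for the first point.
    if np.dot(np.cross(t, g[0]), n[0]) < 0:
        t = -t
    return t
```

With only two correspondences per hypothesis, the number of RANSAC iterations needed for a given outlier ratio is far lower than for the classical five-point relative pose problem.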

L Kneip, M Chli, and R Siegwart. Robust real-time visual odometry with a single camera and an IMU. In Proceedings of the British Machine Vision Conference (BMVC), Dundee, Scotland, August 2011. Oral presentation [pdf] [video]

Robust and efficient LiDAR localization

In this work, we present a tightly-coupled multi-sensor fusion architecture for autonomous vehicle applications, which achieves centimetre-level accuracy and high robustness in various scenarios. In order to realize robust and accurate point-cloud feature matching, we propose a novel method for extracting accurate structural features from LiDAR point clouds. High-frequency motion prediction and noise propagation are achieved by incremental on-manifold IMU pre-integration. We also adopt a multi-frame sliding-window square root inverse filter, such that the system remains numerically stable under the premise of limited power consumption. The methodology is verified in multiple applications and on multiple different autonomous vehicle platforms. Our fusion framework achieves state-of-the-art localization accuracy, high robustness, and good generalization ability. Results include challenging tests on an aggressively moving indoor platform that drifts on slippery ground.
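As a rough illustration of on-manifold pre-integration, the sketch below accumulates only the rotational part of a sequence of gyroscope readings directly on SO(3). It is a simplified example under stated assumptions: a constant, known gyroscope bias, a fixed sampling interval, and no noise propagation or bias Jacobians, all of which a complete pre-integration scheme would include. The function names are hypothetical.

```python
import numpy as np

def so3_exp(phi):
    """Exponential map from a rotation vector to a rotation matrix (Rodrigues)."""
    theta = np.linalg.norm(phi)
    K = np.array([[0.0, -phi[2], phi[1]],
                  [phi[2], 0.0, -phi[0]],
                  [-phi[1], phi[0], 0.0]])
    if theta < 1e-9:
        return np.eye(3) + K          # first-order approximation near identity
    return (np.eye(3)
            + np.sin(theta) / theta * K
            + (1.0 - np.cos(theta)) / theta**2 * (K @ K))

def preintegrate_rotation(gyro, dt, bias=None):
    """Relative rotation accumulated over a sequence of gyroscope samples.

    gyro: N x 3 angular velocities in rad/s, expressed in the body frame.
    dt:   sampling interval in seconds (assumed constant).
    bias: optional 3-vector gyroscope bias (assumed constant and known).
    """
    bias = np.zeros(3) if bias is None else np.asarray(bias, dtype=float)
    dR = np.eye(3)
    for w in np.asarray(gyro, dtype=float):
        dR = dR @ so3_exp((w - bias) * dt)   # incremental update on the manifold
    return dR
```

The accumulated rotation depends only on the IMU samples between two frames, so it can be computed once and reused whenever the states at either end are re-estimated.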

K Li, Z Ouyang, L Hu, D Hao, and L Kneip. Robust SRIF-based LiDAR-IMU Localization for Autonomous Vehicles. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2021 [youtube] [bilibili]

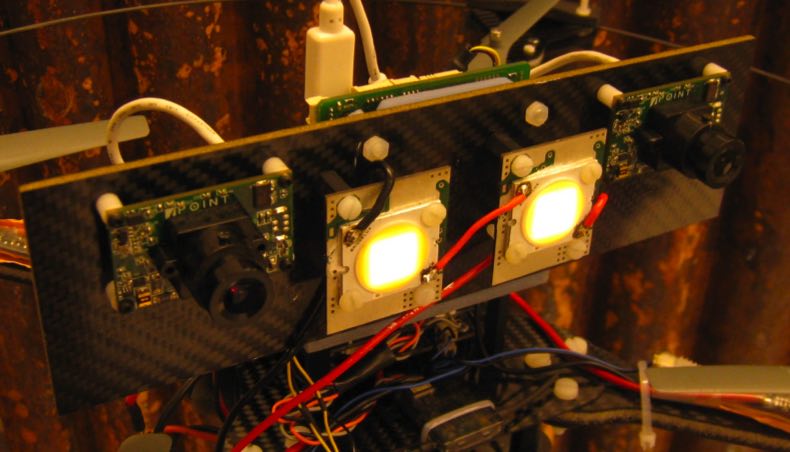

Stereo visual SLAM

A highly popular choice in many existing smart mobile systems is a stereo camera. A stereo camera consists of two synchronized cameras that are laterally displaced with respect to each other. By knowing the extrinsic transformation between both views, the result of either sparse or dense image matching may be readily converted into depth cues. Thus, a stereo vision system does not depend on kinematic depth measurements, but can instantaneously provide metric depth information for different points in the scene. As a result, stereo vision frameworks often perform more robustly than monocular alternatives. They furthermore do not suffer from scale unobservability as long as the ratio between the baseline separating the two cameras and the average depth of the scene does not become too small. MPL's director has contributed to two stereo frameworks designed for VSLAM (Visual Simultaneous Localization And Mapping), running on embedded drone-mounted hardware and on an underwater system, respectively.
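The role of the baseline-to-depth ratio can be made concrete with the standard relation for a rectified stereo pair, which is a special case of the general extrinsic setup described above. The sketch assumes ideal pinhole cameras with identical focal length; the function names and numbers are illustrative only.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Metric depth Z = f * b / d for a rectified pinhole stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px

def depth_error(depth_m, focal_px, baseline_m, disparity_err_px=1.0):
    """First-order depth uncertainty: dZ ~ Z^2 / (f * b) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px

# Example with a 10 cm baseline and a focal length of 500 pixels.
print(depth_from_disparity(20.0, 500.0, 0.1))   # depth at 20 px disparity
print(depth_error(2.5, 500.0, 0.1))             # error for a 1 px mismatch
print(depth_error(25.0, 500.0, 0.1))            # same rig, ten times farther
```

Since the error grows quadratically with depth for a fixed baseline, scenes that are far away relative to the baseline yield almost no usable metric information, and the system then behaves like a monocular camera.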

R Voigt, J Nikolic, C Hürzeler, S Weiss, L Kneip, and R Siegwart. Robust embedded egomotion estimation. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), San Francisco, USA, September 2011 [pdf]

J Zhang, V Ila, and L Kneip. Robust visual odometry in underwater environment. In OCEANS’18 MTS/IEEE Kobe, Kobe, Japan, May 2018 [pdf]

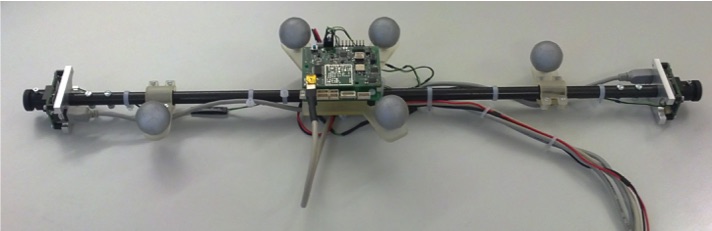

Tracking and mapping with a non-overlapping stereo rig

Since Prof. Kneip's early involvement in the EU FP7 project V-Charge, MPL has continued to investigate VSLAM based on multi-perspective camera systems (MPCs), particularly those for which the cameras share only very little overlap in their fields of view. Such systems commonly occur on passenger vehicles, which are often equipped with a 360-degree surround-view camera system for parking assistance. Given the close-to-market nature of these sensors, it becomes an economically relevant question whether or not MPCs can be used for VSLAM and the solution of certain vehicle autonomy problems. While the tracking accuracy and robustness of vision-based solutions can hardly compete with LiDAR-based solutions, they may already be sufficient for certain less safety-critical applications such as Autonomous Valet Parking (AVP). Perhaps somewhat surprisingly, MPCs are able to render metric scale observable despite potentially having no overlap in their fields of view. Surround-view MPCs also share the benefits of any large field-of-view camera, namely a good ability to distinguish rotational and translational motion patterns, and high tracking robustness (the extended field of view is especially beneficial in poorly textured environments). MPL is among the global leaders in the handling of MPCs, and has put strong emphasis on the development of fundamental geometric pose calculation algorithms for non-central (including multi-perspective) camera systems. More information on this can be found on our research page on geometric solvers, and most solvers can be found in our open-source project OpenGV. Starting from a simple non-overlapping stereo visual odometry framework, we have developed full-scale systems for 360-degree MPCs. For all details on this work, including our collaboration with industry, the reader is kindly invited to visit our page on 360-degree MPCs.

T Kazik, L Kneip, J Nikolic, M Pollefeys, and R Siegwart. Real-time 6D stereo visual odometry with non-overlapping fields of view. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, USA, June 2012 [pdf] [video]

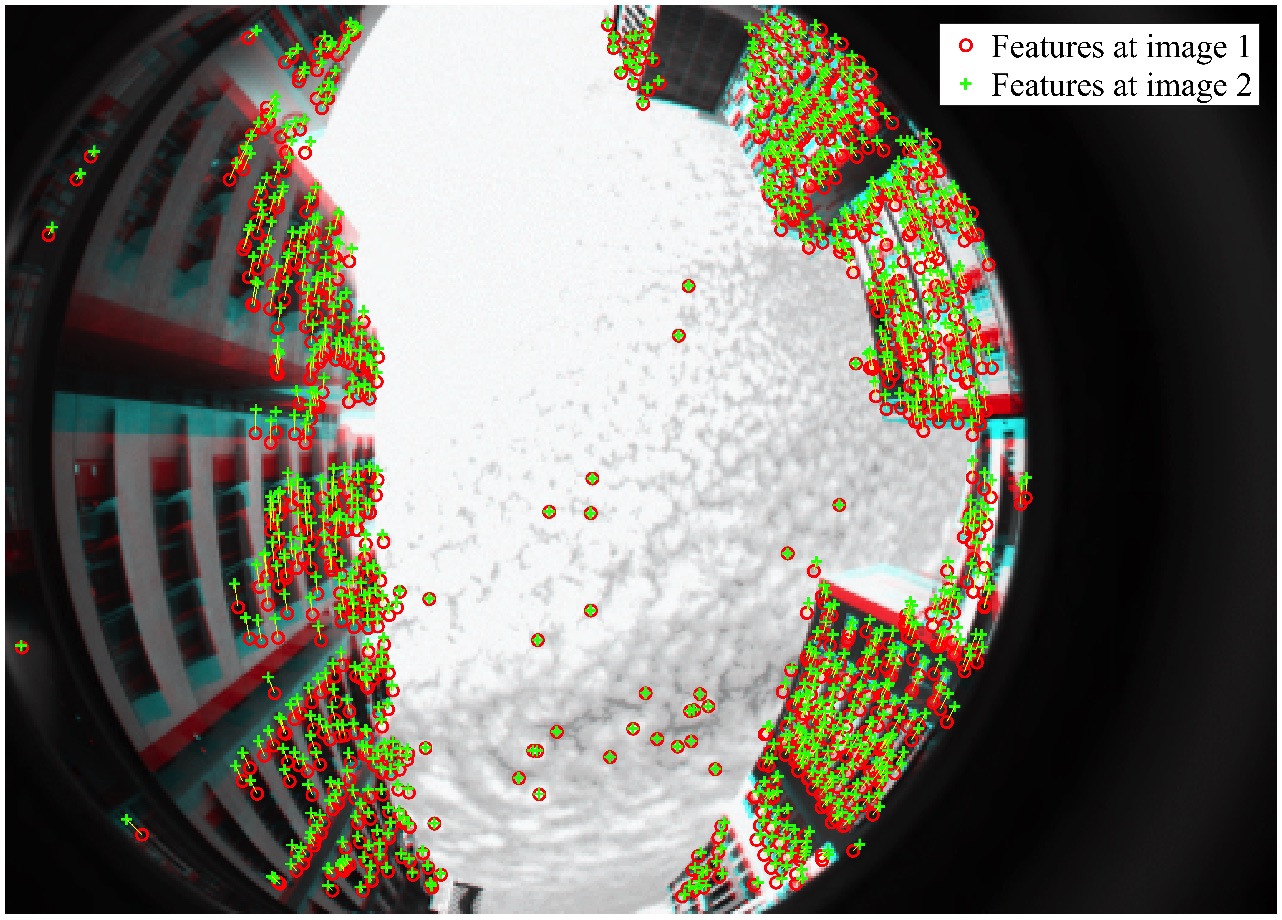

SLAM with omni-directional cameras

Similar to MPCs, omni-directional cameras (e.g. catadioptric cameras) have the advantage of multi-directional observations given by an enlarged field of view. The resulting benefits are a potential increase in the number of features available for tracking and in the distinctiveness of rotation- and translation-induced optical flow patterns. Omni-directional cameras therefore have the potential advantage of improved motion estimation accuracy and robustness over regular, monocular cameras. One particularity of omni-directional cameras is that the field of view may exceed 180 degrees, and that, as a result, normalized measurements may have to be expressed as a general 3D bearing vector rather than a 2D (homogeneous) point on the normalized image plane. It is worthwhile to note that all algorithms in OpenGV have been consistently designed to operate with 3D bearing vectors, and are thus ready to be applied to (calibrated) omni-directional cameras. We have furthermore collaborated on a full, MSCKF-based visual odometry framework for omni-directional measurements.
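The need for 3D bearing vectors can be seen in a small sketch. It assumes an ideal equidistant fisheye model (image radius proportional to the angle from the optical axis), which is only one of several possible omni-directional camera models and is chosen here for brevity; the function names and parameters are hypothetical and unrelated to the OpenGV interface.

```python
import numpy as np

def pixel_to_bearing(u, v, focal_px, cx, cy):
    """Unit bearing vector for a pixel under an equidistant fisheye model."""
    dx, dy = u - cx, v - cy
    theta = np.hypot(dx, dy) / focal_px     # angle from the optical axis
    phi = np.arctan2(dy, dx)                # direction within the image plane
    return np.array([np.sin(theta) * np.cos(phi),
                     np.sin(theta) * np.sin(phi),
                     np.cos(theta)])

def bearing_to_normalized_point(bearing):
    """2D point on the normalized image plane, or None if it does not exist."""
    if bearing[2] <= 0:
        return None                         # ray points sideways or backwards
    return bearing[:2] / bearing[2]
```

A ray at 120 degrees from the optical axis has a negative z-component, so it has no counterpart on the normalized image plane, whereas its bearing vector remains a perfectly valid unit measurement.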

M Ramezani, K Khoshelham, and L Kneip. Omnidirectional Visual-Inertial Odometry Using Multi-State Constraint Kalman Filter. In Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems (IROS), Vancouver, Canada, September 2017 [pdf]

Line-based SLAM with a camera matrix

Stereo cameras only have a one-dimensional disparity space, which causes depth unobservabilities in situations where gradient edges are primarily aligned with the stereo camera's baseline vector. Light-field cameras represent an interesting, multi-baseline extension of stereo cameras. A typical arrangement consists of a square grid of multiple forward-facing cameras, thus enabling disparity measurements in all directions. In our recent research, we exploit the high redundancy in light-field imagery for accurate, reliable line-based visual SLAM. Our approach achieves state-of-the-art tracking accuracy comparable to that of dense depth camera alternatives.

P Yu, C Wang, Z Wang, J Yu, and L Kneip. Accurate line-based relative pose estimation with camera matrices. IEEE Access, 8:88294–88307, 2020. Open access [pdf]