Camera Calibration

Calibration is an essential prerequisite for many visual perception problems, in particular geometric problems such as Simultaneous Localization And Mapping (SLAM). At MPL, we have looked at a number of camera calibration problems, ranging from the intrinsic calibration of single regular, rolling shutter, and event cameras to the calibration of the extrinsic transformation parameters of a multi-perspective camera system.

Intrinsic calibration from natural images

We have collaborated on the development of a Direct Least Squares (DLS) solution to the absolute pose problem with partial calibration. The method identifies the focal length of the camera in addition to the camera pose.

Y Zheng and L Kneip. A direct least-squares solution to the PnP problem with unknown focal length. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, USA, June 2016 [pdf]
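To illustrate why the focal length is observable from 2D-3D correspondences, here is a minimal sketch. It is not the DLS solver from the paper: it assumes the camera pose is already known (points are given in the camera frame), that pixel coordinates are centered on the principal point, and that there is no lens distortion. Under those assumptions the focal length follows from a one-parameter linear least-squares fit. The function name and the numbers are hypothetical.

```python
def estimate_focal_length(points_cam, pixels):
    """Least-squares focal length from 3D points (camera frame) and centered pixels.

    Pinhole model assumed here: u = f * X / Z, v = f * Y / Z.
    """
    numerator = 0.0
    denominator = 0.0
    for (X, Y, Z), (u, v) in zip(points_cam, pixels):
        x, y = X / Z, Y / Z  # normalized image coordinates
        numerator += u * x + v * y
        denominator += x * x + y * y
    return numerator / denominator


# Hypothetical, noise-free data generated with a focal length of 500 pixels.
points_cam = [(0.1, 0.2, 1.0), (-0.4, 0.1, 2.0)]
pixels = [(50.0, 100.0), (-100.0, 25.0)]
focal_length = estimate_focal_length(points_cam, pixels)
```

In the partially calibrated absolute pose problem, the pose is unknown as well, so the focal length and the pose have to be estimated jointly rather than with a closed-form fit like this one.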

Rolling shutter camera calibration

Most consumer-grade cameras are not equipped with a global shutter, but capture images line by line. This mechanism is called a rolling shutter, and it is used across a wide range of consumer electronic devices, from smartphones to high-end cameras. It leads to image distortions if either the scene or, more importantly in the context of our research agenda, the camera is in motion while an image is captured. The distortions furthermore become more pronounced as the camera motion becomes more dynamic. In order to perform accurate visual SLAM with a rolling shutter camera, we therefore often employ continuous-time trajectory models. Knowing the image row in which a measurement is located and the exact time at which that row was captured, each measurement residual may then be formulated as a function of the camera pose at the very moment that row was captured.
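The relation between image row, capture time, and camera pose can be sketched as follows. This is a simplified illustration, not the formulation used in our work: it assumes a constant line delay, and it replaces the continuous-time trajectory model with plain linear interpolation of the camera position between two timed samples. All names and values are hypothetical.

```python
def row_timestamp(frame_start, row, line_delay):
    """Capture time of an image row, assuming a constant delay between rows."""
    return frame_start + row * line_delay


def interpolate_position(t, t0, p0, t1, p1):
    """Linearly interpolate a camera position between two timed samples."""
    alpha = (t - t0) / (t1 - t0)
    return tuple(a + alpha * (b - a) for a, b in zip(p0, p1))


# Hypothetical values: a measurement in row 240, 30 microseconds per row.
line_delay = 30e-6
t_row = row_timestamp(frame_start=0.0, row=240, line_delay=line_delay)

# Camera position at the moment row 240 was captured, from two trajectory samples.
position = interpolate_position(
    t_row, 0.0, (0.0, 0.0, 0.0), 0.0144, (0.1, 0.0, 0.0)
)
```

A residual for a measurement in row 240 would then be evaluated with the pose at `t_row` rather than with a single pose for the whole frame.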

A prerequisite for the use of such continuous-time trajectory models is accurate knowledge of the shutter timing, meaning the line delay between the capture of consecutive image rows. In the following work, we exploit the fact that the line delay is observable as a function of residual reprojection errors. We therefore present a new method that only requires video of a known calibration pattern. The core contribution is an error standardization method that propagates the measurement noise into the residual error space. This procedure is non-trivial because the measurement is also used to define the row, and thus the time instant, of a measurement residual. Noise in the measurement therefore translates into an error in that time instant.

L Oth, P T Furgale, L Kneip, and R Siegwart. Rolling shutter camera calibration. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Portland, USA, June 2013 [pdf]
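The coupling between measurement noise and capture time can be made concrete with a scalar toy model. The sketch below is not the derivation from the paper. It assumes a one-dimensional residual r(v) = v - v_pred(t0 + line_delay * v), where v is the measured row and v_pred is the row predicted from the trajectory, and it applies first-order noise propagation. The function name, the `predicted_row_velocity` parameter, and the numbers are assumptions for illustration only.

```python
def residual_std(pixel_std, line_delay, predicted_row_velocity):
    """First-order standard deviation of r(v) = v - v_pred(t0 + line_delay * v).

    The measured row v appears twice: directly, and through the time at which
    the prediction is evaluated. Both paths contribute to the Jacobian dr/dv.
    """
    jacobian = 1.0 - line_delay * predicted_row_velocity
    return abs(jacobian) * pixel_std


# Hypothetical values: 0.5 px measurement noise, 30 microseconds per row,
# and a predicted feature moving at 2000 rows per second.
sigma_r = residual_std(pixel_std=0.5, line_delay=30e-6, predicted_row_velocity=2000.0)

# A raw residual is standardized by dividing by its propagated deviation.
standardized = 0.2 / sigma_r
```

With a line delay of zero, the Jacobian reduces to one and the residual noise equals the measurement noise, which matches the global shutter case.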

Dynamic event camera calibration

Code Available here!

Event cameras are bio-inspired sensors that do not capture images frame-by-frame, but capture changes in the logarithmic intensity independently and asynchronously at each pixel. Changes are returned in the discretized form of events that simply indicate a change of the logarithmic intensity by a certain quantity. Each event is characterized by a pixel location and a time-stamp with high temporal resolution. For more information on event cameras, please visit the respective page on event camera research.
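The event generation principle described above can be sketched for a single pixel. This is an idealized model, not a description of any specific sensor: the contrast threshold of 0.2, the signed polarity attached to each event, and the sampled intensity values are all assumptions made for illustration.

```python
import math


def generate_events(timestamps, intensities, threshold=0.2):
    """Emit (timestamp, polarity) events for one pixel from intensity samples.

    An event fires each time the log intensity has moved by `threshold`
    away from the reference level set by the previous event.
    """
    events = []
    reference = math.log(intensities[0])
    for t, intensity in zip(timestamps[1:], intensities[1:]):
        delta = math.log(intensity) - reference
        while abs(delta) >= threshold:
            polarity = 1 if delta > 0 else -1
            events.append((t, polarity))
            reference += polarity * threshold
            delta = math.log(intensity) - reference
    return events


# Hypothetical samples: the pixel brightens, then darkens again.
events = generate_events([0.0, 0.001, 0.002], [1.0, 1.5, 0.9])
```

A pixel whose intensity stays constant produces no events at all under this model.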

MPL has developed a novel open-source event camera calibration framework. Camera calibration is an important prerequisite towards the solution of 3D computer vision problems. Traditional methods rely on static images of a calibration pattern. This raises interesting challenges towards the practical usage of event cameras, which notably require image change to produce sufficient measurements. The current standard for event camera calibration therefore consists of using flashing patterns. They have the advantage of simultaneously triggering events in all reprojected pattern feature locations, but it is difficult to construct or use such patterns in the field. We present the first dynamic event camera calibration algorithm. It calibrates directly from events captured during relative motion between camera and calibration pattern. The method is propelled by a novel feature extraction mechanism for calibration patterns, and leverages existing calibration tools before optimizing all parameters through a multi-segment continuous-time formulation. The resulting calibration method is highly convenient and reliably calibrates from data sequences spanning less than 10 seconds.

K. Huang, Y. Wang, and L. Kneip. Dynamic Event Camera Calibration. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2021. [pdf] [code] [youtube] [bilibili]

Calibration of extrinsic parameters

An important problem for the practical use of 360-degree surround-view cameras is the extrinsic calibration between the cameras, which is challenging because the fields of view of neighbouring cameras often overlap only slightly or not at all. MPL has developed several methods for calibrating non-overlapping multi-perspective cameras (MPCs):

Infrastructure-based calibration:

In our CVPR'12 work on non-overlapping stereo visual tracking and mapping, we have employed an infrastructure-based calibration that relies on an external motion capture system. The MPC is placed statically in the center of the motion tracking arena, and a checkerboard equipped with tracking markers is moved in front of each of the two cameras in turn. Using hand-eye calibration, this procedure enables the derivation of the absolute pose of each camera w.r.t. the motion tracking system, and thus indirectly also the relative pose between the two cameras.

T Kazik, L Kneip, J Nikolic, M Pollefeys, and R Siegwart. Real-time 6D stereo visual odometry with non-overlapping fields of view. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, USA, June 2012 [pdf] [video]
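The last step of the infrastructure-based procedure, going from two absolute camera poses to one relative pose, can be sketched as follows. The sketch covers only this composition step and not the hand-eye calibration itself. It assumes that each pose is given as a rotation matrix and translation mapping camera coordinates into the motion tracking frame. The helper names and the example poses are hypothetical.

```python
def invert(pose):
    """Invert a rigid transform (R, t), with R a 3x3 nested list."""
    R, t = pose
    R_inv = [[R[j][i] for j in range(3)] for i in range(3)]  # transpose
    t_inv = [-sum(R_inv[i][k] * t[k] for k in range(3)) for i in range(3)]
    return R_inv, t_inv


def compose(a, b):
    """Return the transform a * b (apply b first, then a)."""
    Ra, ta = a
    Rb, tb = b
    R = [[sum(Ra[i][k] * Rb[k][j] for k in range(3)) for j in range(3)]
         for i in range(3)]
    t = [sum(Ra[i][k] * tb[k] for k in range(3)) + ta[i] for i in range(3)]
    return R, t


def relative_pose(T_world_cam1, T_world_cam2):
    """Pose of camera 2 expressed in the frame of camera 1."""
    return compose(invert(T_world_cam1), T_world_cam2)


# Hypothetical absolute poses: camera 1 is rotated by 90 degrees about z.
T_world_cam1 = ([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]], [1.0, 0.0, 0.0])
T_world_cam2 = ([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]], [1.0, 2.0, 0.0])
R_rel, t_rel = relative_pose(T_world_cam1, T_world_cam2)
```

Because both absolute poses refer to the same tracking frame, that frame cancels out in the composition and the cameras never need to observe a common target.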

Mirror-based calibration:

The method again focusses on the non-overlapping stereo rig. By using a mirror in front of one camera and placing the checkerboard in front of the other camera, the calibration pattern can be rendered visible in both cameras at the same time. Extrinsic calibration is enabled by a dedicated solver that simultaneously infers the camera pose and mirror plane parameters.

G Long, L Kneip, X Li, X Zhang, and Q Yu. Simplified mirror-based camera pose computation via rotation averaging. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, USA, June 2015 [pdf]
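The geometry behind mirror-based calibration rests on reflecting points across the mirror plane: a camera looking into the mirror observes the calibration pattern at its reflected position. The sketch below shows only this reflection, not the solver from the paper. It assumes the plane is parameterized by a unit normal and a distance from the origin; the function name and values are hypothetical.

```python
def reflect_point(point, normal, distance):
    """Reflect a 3D point across the plane {x : normal . x = distance}.

    `normal` is assumed to have unit length.
    """
    signed = sum(n * p for n, p in zip(normal, point)) - distance
    return tuple(p - 2.0 * signed * n for p, n in zip(point, normal))


# Hypothetical mirror plane z = 1 and a pattern corner in front of it.
mirror_normal = (0.0, 0.0, 1.0)
mirror_distance = 1.0
corner = (0.5, 0.25, 0.5)
virtual_corner = reflect_point(corner, mirror_normal, mirror_distance)

# Reflecting twice returns the original point.
restored = reflect_point(virtual_corner, mirror_normal, mirror_distance)
```

Since the plane parameters are not known in advance, they have to be estimated together with the camera pose, which is why a dedicated solver is needed.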

Self-calibration from natural scenes:

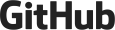

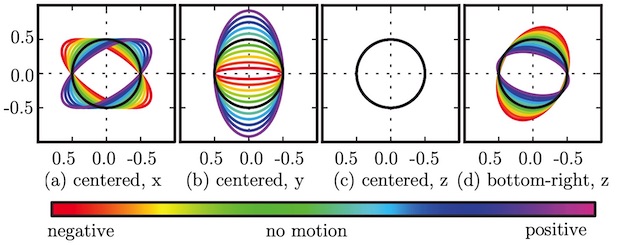

The final method works with regular images and for MPCs mounted on planar ground vehicles that obey the Ackermann steering model. It is motivated by two insights. First, we argue that the accuracy of vision-based vehicle motion estimation depends crucially on the quality of exterior orientation calibration, while design parameters for camera positions typically provide sufficient accuracy. The method hence only calibrates rotations. Second, we demonstrate how direction vectors related to planar vehicle motion can be used to accurately identify individual camera-to-vehicle rotations, which are more useful than the commonly and tediously derived camera-to-camera transformations. In particular, for Ackermann vehicles, the translation direction in the zero-rotation case becomes equivalent to the vehicle forward direction.

Z. Ouyang, L. Hu, Y. Lu, Z. Wang, X. Peng, and L. Kneip. Online calibration of exterior orientations of a vehicle-mounted surround-view camera system. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Paris, France, May 2020
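As a rough illustration of how motion-related direction vectors can determine a camera-to-vehicle rotation, consider the sketch below. It is not the algorithm from the paper. It assumes, purely for illustration, that two directions have already been measured in the camera frame: the translation direction during straight driving (taken as the vehicle forward axis) and a second direction taken as the vehicle up axis. It also assumes a vehicle frame with x forward, y left, and z up. All names and values are hypothetical.

```python
import math


def normalize(v):
    """Scale a 3-vector to unit length."""
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]


def cross(a, b):
    """Cross product of two 3-vectors."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def rotation_vehicle_from_camera(forward_cam, up_cam):
    """Rows are the vehicle x (forward), y (left), z (up) axes in camera coordinates."""
    x_axis = normalize(forward_cam)
    # Remove any component of 'up' along 'forward' so the axes are orthogonal.
    dot = sum(u * x for u, x in zip(up_cam, x_axis))
    z_axis = normalize([u - dot * x for u, x in zip(up_cam, x_axis)])
    y_axis = cross(z_axis, x_axis)
    return [x_axis, y_axis, z_axis]


# Hypothetical front camera: optical axis (z) points forward, image y points down.
R_vehicle_cam = rotation_vehicle_from_camera([0.0, 0.0, 1.0], [0.0, -1.0, 0.0])

# Mapping the measured forward direction into the vehicle frame gives the x axis.
forward_vehicle = [sum(r * c for r, c in zip(row, [0.0, 0.0, 1.0]))
                   for row in R_vehicle_cam]
```

Each camera obtains its own rotation with respect to the vehicle in this way, so no overlap between the cameras is required.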